In 2026, mobile OCR is no longer just a tool for extracting text from images. It has evolved into an intelligent perception layer that understands context, layout, and meaning in real time. For gadget enthusiasts watching the rapid convergence of AI and hardware, this shift marks one of the most exciting frontiers in mobile innovation.

Japanese text recognition has long been considered one of the toughest challenges in OCR, due to its mix of kanji, hiragana, katakana, alphanumeric characters, and vertical writing. Today, Vision-Language Models (VLMs) combined with powerful on-device NPUs are pushing accuracy to near-human levels, even in noisy, low-light, or complex document scenarios. Smartphones and smart glasses are transforming into always-on AI scanners that augment how we see and interpret the world.

In this article, you will discover how flagship devices like iPhone 17 Pro, Pixel 10 Pro, and Galaxy S26 enhance OCR through advanced imaging pipelines, how Apple Live Text and Google Lens compete at the platform level, and how AI-OCR is saving tens of thousands of work hours in real businesses. We will also explore cultural applications such as AI-powered kuzushiji recognition and examine the privacy, security, and hardware challenges shaping the next phase of mobile AI.

- From Text Extraction to Context Understanding: The Paradigm Shift in OCR

- Why Japanese Is the Ultimate OCR Stress Test

- How Vision-Language Models Eliminate Ambiguity in Real-World Documents

- Flagship Smartphone Cameras as AI-Optimized Scanning Sensors

- Smart Glasses and Real-Time AR Translation: OCR Beyond the Smartphone

- On-Device AI, NPUs, and the End of Cloud Dependency

- Enterprise Impact: AI-OCR Saving 60,000+ Work Hours a Year

- Cultural Preservation: AI Decoding Historical Kuzushiji Manuscripts

- Apple Live Text vs Google Lens: Platform-Level AI Competition in 2026

- Accuracy, Risk, and the 0.1% Problem in High-Stakes Documents

- Rising Memory Costs, AI Security, and the Next Bottlenecks

- References

From Text Extraction to Context Understanding: The Paradigm Shift in OCR

In 2026, mobile OCR is no longer just a tool that converts pixels into characters. It has evolved into a system that understands why those characters exist in a specific place and what they mean within a broader structure.

Traditional OCR relied heavily on edge detection and pattern matching. It compared detected shapes with pre-trained font templates and output the closest match. This worked reasonably well for clean, standardized documents, but it struggled with noisy images, mixed scripts, and complex layouts.

The real breakthrough comes from the integration of Vision Language Models (VLMs), which fuse computer vision and natural language processing into a single contextual engine.

From Shape Recognition to Meaning Inference

Japanese text presents a uniquely difficult challenge: kanji, hiragana, katakana, and alphanumeric characters often coexist on the same page, sometimes arranged both vertically and horizontally. Earlier OCR systems frequently misread visually similar characters such as “0” and “O,” or “エ” and “工.”

VLM-powered OCR addresses this by analyzing surrounding words, document structure, and semantic probability. According to CambioML’s 2026 analysis on VLM-based OCR, modern systems statistically infer the most plausible character based on contextual coherence rather than visual similarity alone.

For example, in a financial statement, a circular glyph appearing in a column of monetary values is interpreted as “0” with high confidence. The same shape embedded within a company name is recognized as “O.” The system effectively mirrors human contextual reasoning.

| Aspect | Early 2020s OCR | VLM-Based OCR (2026) |
|---|---|---|
| Core Logic | Pattern matching | Context and semantic inference |
| Ambiguous Characters | Shape-only decision | Probability-based contextual selection |
| Mixed Scripts | Model switching required | Seamless unified processing |
| Layout Handling | Limited to fixed formats | Understands structural relationships |

Understanding Document Structure, Not Just Text

Another fundamental shift lies in structural comprehension. Modern business documents contain tables, annotations, charts, and nested sections. Earlier OCR often flattened these elements into disordered text streams.

VLM-based systems interpret spatial hierarchy: which text belongs to which cell, which header governs which column, and how captions relate to figures. This structural awareness preserves meaning during extraction, enabling downstream automation to function reliably.
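As a rough illustration of what "which header governs which column" means in practice, the sketch below rebuilds table rows from loose text boxes using nothing but their positions. It is a deliberately simple geometric stand-in, not how a VLM actually works internally. The `Box` type, the `rebuild_rows` helper, the coordinates, and the `row_tolerance` value are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Box:
    text: str
    x: float  # horizontal center of the detected text box
    y: float  # vertical center of the detected text box


def rebuild_rows(headers: list[Box], cells: list[Box],
                 row_tolerance: float = 10.0) -> list[dict[str, str]]:
    """Group cells into rows by y position, then attach each cell to the nearest header by x."""
    rows: list[list[Box]] = []
    for cell in sorted(cells, key=lambda b: b.y):
        # A cell joins the current row if it sits at roughly the same height.
        if rows and abs(rows[-1][0].y - cell.y) <= row_tolerance:
            rows[-1].append(cell)
        else:
            rows.append([cell])

    records = []
    for row in rows:
        record = {}
        for cell in row:
            nearest = min(headers, key=lambda h: abs(h.x - cell.x))
            record[nearest.text] = cell.text
        records.append(record)
    return records


# Hypothetical detections from a two-column invoice table.
headers = [Box("Item", 50, 0), Box("Amount", 200, 0)]
cells = [
    Box("Paper", 52, 30), Box("1,200", 198, 32),
    Box("Toner", 49, 60), Box("8,000", 201, 58),
]
table = rebuild_rows(headers, cells)
```

Even this toy version keeps each amount tied to its item instead of flattening everything into one text stream, which is the property downstream automation depends on.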

In financial, HR, and legal workflows where non-standard forms are common, this capability is transformative. The system does not merely read characters; it recognizes roles, relationships, and metadata embedded within the layout.

This shift also improves resilience to imperfect inputs. When images are slightly blurred or partially occluded, contextual modeling compensates for missing visual cues. Instead of failing outright, the model reconstructs likely text through semantic continuity.

Industry observers have begun describing this evolution as a move from text extraction to perception infrastructure. Rather than acting as a passive converter, OCR now behaves as an interpretive layer between the physical world and digital systems.

For gadget enthusiasts and power users, this means the camera is no longer just a capture device. It becomes a semantic sensor—one that reads, understands, and prepares information for action in real time.

Why Japanese Is the Ultimate OCR Stress Test

Japanese is widely regarded by engineers as one of the most demanding environments for optical character recognition.

It is not simply a matter of “more characters.” It is the structural, visual, and contextual complexity of the writing system that turns Japanese into a proving ground for next-generation OCR.

According to analyses of Vision Language Models by CambioML, Japanese forces AI systems to move beyond pattern matching and into true contextual understanding.

A single document may contain kanji, hiragana, katakana, Latin letters, and Arabic numerals.

Unlike alphabetic languages, kanji alone number in the thousands for daily use, each with intricate stroke patterns that must be distinguished under noise, blur, or distortion.

This dramatically expands the classification space compared to languages that rely on a few dozen symbols.

| Challenge | Why It Is Difficult | Impact on OCR |
|---|---|---|
| Multiple scripts | Kanji + kana + Latin mixed | Requires script switching without model reset |
| Visual similarity | Characters like エ and 工 | High misrecognition risk without context |
| Mixed orientation | Vertical and horizontal text coexist | Reading order detection becomes critical |
| Dense layouts | Tables, stamps, annotations | Structure preservation is required |

Character ambiguity is another severe stress factor.

For example, the numeral 0 and the letter O are visually similar, and katakana エ can resemble the kanji 工 depending on font weight and resolution.

Traditional OCR systems that rely primarily on shape comparison frequently fail in these edge cases.

Japanese documents also shift writing direction fluidly.

Books, contracts, and official forms may use vertical text, while embedded tables and side notes switch to horizontal alignment.

An OCR engine must detect not only characters but also reading flow, which becomes far harder when layouts are non-standard.
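To see why reading flow matters, consider the same four character boxes read in two different orders. The sketch below assumes the writing direction is already known and only handles the sorting step. Vertical Japanese text runs top to bottom, with columns ordered right to left. The `reading_order` function, the tuple layout, and the `tolerance` bucket size are hypothetical choices for this example.

```python
def reading_order(boxes, vertical: bool, tolerance: float = 10.0):
    """Order text boxes for reading.

    boxes: list of (text, x, y) tuples, with the origin at the top-left of the page.
    """
    if vertical:
        # Columns run right to left; inside a column, read top to bottom.
        key = lambda b: (-round(b[1] / tolerance), b[2])
    else:
        # Lines run top to bottom; inside a line, read left to right.
        key = lambda b: (round(b[2] / tolerance), b[1])
    return [b[0] for b in sorted(boxes, key=key)]


# Four boxes on a 2x2 grid (hypothetical coordinates).
boxes = [("A", 100, 0), ("B", 100, 20), ("C", 80, 0), ("D", 80, 20)]

as_vertical = reading_order(boxes, vertical=True)
as_horizontal = reading_order(boxes, vertical=False)
```

The identical boxes yield two different sequences, so an engine that guesses the direction wrong produces scrambled text even when every individual character is recognized correctly.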

The Information-technology Promotion Agency of Japan has repeatedly highlighted the complexity of Japanese administrative forms in digital transformation efforts.

Non-standardized fields, personal seals, and handwritten additions introduce further variability.

This makes Japanese business documents particularly unforgiving as benchmark datasets.

In 2026, Vision Language Models mitigate these stress factors by interpreting semantic context rather than isolated glyphs.

Instead of asking “What shape is this?” the model asks “What word logically fits here?”

This shift from visual decoding to contextual reasoning is precisely what Japanese demands.

Because of these layered challenges, success in Japanese OCR often signals robustness across other languages.

If a system can accurately parse mixed scripts, ambiguous glyphs, vertical layouts, and dense tables simultaneously, it demonstrates resilience under extreme linguistic conditions.

That is why, in the AI community, Japanese is not just another language—it is the ultimate OCR stress test.

How Vision-Language Models Eliminate Ambiguity in Real-World Documents

Vision-Language Models fundamentally change how OCR systems deal with ambiguity in real-world documents.

Traditional OCR relies on visual pattern matching. It compares pixel shapes to learned character templates and outputs the closest match.

VLMs, by contrast, interpret text as part of a broader semantic and visual context, enabling them to resolve uncertainty the way humans naturally do.

According to CambioML, VLM-based systems integrate computer vision and natural language understanding into a single inference pipeline. This means the model does not simply “see” characters; it understands relationships between words, layout structures, and document intent.

When a character is visually ambiguous, the model evaluates surrounding tokens, positional patterns, and document type before making a decision.

This contextual reasoning dramatically reduces misrecognition in noisy or complex environments.

| Ambiguous Case | Traditional OCR | VLM-Based OCR |
|---|---|---|
| “0” vs “O” | Shape similarity only | Uses numeric column context |
| Katakana “エ” vs kanji “工” | Stroke comparison | Analyzes sentence semantics |
| Mixed Japanese/English text | Model switching required | Unified multilingual inference |

For example, in financial statements, circular glyphs aligned in numeric columns are statistically interpreted as zero. In company names or addresses, the identical shape is interpreted as the letter “O.”

The decision is not visual alone but probabilistic and semantic, combining layout, frequency distribution, and linguistic plausibility.
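To make the idea concrete, here is a minimal sketch of how a visual score and a contextual expectation could be combined. It is an illustration only: the `fuse` function, the score values, and the priors are all hypothetical, and real VLMs learn this behavior end to end rather than multiplying two hand-written tables.

```python
def fuse(visual: dict[str, float], context_prior: dict[str, float]) -> dict[str, float]:
    """Multiply visual likelihoods by a context prior, then normalize to probabilities."""
    unnormalized = {ch: visual[ch] * context_prior.get(ch, 0.0) for ch in visual}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("no candidate is supported by both signals")
    return {ch: score / total for ch, score in unnormalized.items()}


# Hypothetical numbers: the glyph looks almost equally like "0" and "O".
visual_scores = {"0": 0.52, "O": 0.48}

# Hypothetical priors: digits dominate an amount column, letters dominate a company name.
numeric_column_prior = {"0": 0.95, "O": 0.05}
company_name_prior = {"0": 0.10, "O": 0.90}

in_amount_column = fuse(visual_scores, numeric_column_prior)
in_company_name = fuse(visual_scores, company_name_prior)

best_in_column = max(in_amount_column, key=in_amount_column.get)
best_in_name = max(in_company_name, key=in_company_name.get)
```

With these made-up inputs, the same nearly ambiguous shape resolves to the digit in the amount column and to the letter in the company name, because the context term outweighs the small visual difference.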

This reduces downstream correction costs and prevents cascading data errors.

Ambiguity also appears in document structure. Real-world documents contain tables, charts, annotations, and irregular formatting.

Earlier OCR systems often flattened these into plain text, losing structural meaning.

VLM-based models preserve hierarchical relationships between headers, cells, and explanatory notes.

In invoice processing or HR documents, this structural awareness allows the system to distinguish between item totals, tax fields, and reference numbers—even when formats vary.

Industry reports in 2026 indicate that combining contextual inference with human double-check workflows pushes practical accuracy toward 99.9% in enterprise environments.

The key improvement lies not in sharper character detection, but in contextual disambiguation.

Noise tolerance is another area where ambiguity is sharply reduced. Blurred images, uneven lighting, or partial occlusion previously led to character-level failure.

VLMs compensate by predicting missing or degraded tokens using surrounding meaning.

This mirrors how humans read partially smudged text without conscious effort.

Crucially, the model evaluates both visual signals and linguistic coherence simultaneously.

Rather than asking “What shape is this?”, it asks “What makes sense here?”

That shift—from pattern recognition to contextual reasoning—is what allows modern OCR to operate reliably in messy, real-world conditions.

Flagship Smartphone Cameras as AI-Optimized Scanning Sensors

By 2026, flagship smartphones have effectively transformed their camera systems into AI-optimized scanning sensors. Devices such as iPhone 17 Pro, Pixel 10 Pro, and Galaxy S26 no longer capture images merely for photography; they generate computationally refined visual data tailored for downstream AI inference, including advanced OCR powered by Vision Language Models.

The camera is now the first stage of the AI pipeline. Before any VLM interprets text, the imaging stack has already enhanced contrast, reduced noise, and reconstructed fine details to maximize recognition accuracy.

AI-Driven Image Conditioning for OCR

| Device | Imaging Strength | OCR Impact |
|---|---|---|
| Galaxy S26 | Advanced HDR optimization | Improved character contrast in harsh lighting |
| Pixel 10 Pro | AI detail enhancement in zoom/low light | Recovery of small or distant text |
| iPhone 17 Pro | High optical fidelity, minimal overprocessing | Preservation of micro-texture for VLM analysis |

According to reporting by ITmedia, recent flagship models emphasize computational photography tuned not only for aesthetics but also for machine readability. For example, enhanced HDR pipelines suppress highlight clipping and shadow crushing, ensuring that printed characters maintain clear edge boundaries even under strong office lighting.

Pixel’s AI-based super-resolution techniques play a critical role when scanning receipts or signage from a distance. By reconstructing high-frequency detail, the device provides cleaner glyph contours to the recognition engine. This directly reduces ambiguity between visually similar characters, a key issue in multilingual contexts.

Meanwhile, Apple’s imaging philosophy prioritizes optical accuracy and controlled processing. By avoiding aggressive sharpening artifacts, the camera preserves subtle stroke variations that VLM-based OCR systems rely on for contextual disambiguation.

Input quality determines inference quality. Even the most advanced on-device VLM cannot compensate for severe motion blur or blown-out highlights. The 2026 flagship stack mitigates these risks through multi-frame fusion, real-time exposure bracketing, and AI-driven noise modeling.
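The benefit of multi-frame fusion can be shown with a toy simulation. The sketch below assumes a perfectly static, already aligned scene and simple Gaussian sensor noise. Real pipelines also have to align frames and reject motion, which is omitted here. The helper names and the noise level are assumptions made for this example.

```python
import random
from statistics import pstdev


def capture_frame(true_pixels, noise_sigma, rng):
    """Simulate one noisy exposure of the same scene."""
    return [p + rng.gauss(0, noise_sigma) for p in true_pixels]


def fuse_frames(frames):
    """Average corresponding pixels across frames."""
    return [sum(px) / len(frames) for px in zip(*frames)]


rng = random.Random(0)          # fixed seed so the run is repeatable
true_pixels = [128.0] * 2000    # a flat gray patch, so any variation is pure noise
frames = [capture_frame(true_pixels, 10.0, rng) for _ in range(16)]

single_noise = pstdev(frames[0])
fused_noise = pstdev(fuse_frames(frames))
```

Averaging N independent exposures reduces random noise by roughly the square root of N, so fusing 16 frames should leave about a quarter of the original noise. That cleaner signal is what keeps thin strokes from dissolving before recognition even starts.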

Ultra-wide cameras have also matured significantly. Reviews highlighted by Watch Impress note improved low-light performance across lenses, enabling users to scan large-format documents, posters, or whiteboards in a single frame without sacrificing corner clarity. For enterprise workflows, this reduces the need for manual cropping and rescanning.

The result is a shift in perception: users are no longer “taking a photo of text.” They are activating a sensor array optimized for semantic capture. The camera preprocesses the physical world into structured visual signals, ready for immediate interpretation by on-device AI.

In practical terms, this means faster scan cycles, fewer recognition errors, and higher trust in mobile document capture. Flagship smartphones in 2026 function as portable, AI-calibrated scanners—bridging optics and neural computation in a tightly integrated system designed for real-world, high-precision text acquisition.

Smart Glasses and Real-Time AR Translation: OCR Beyond the Smartphone

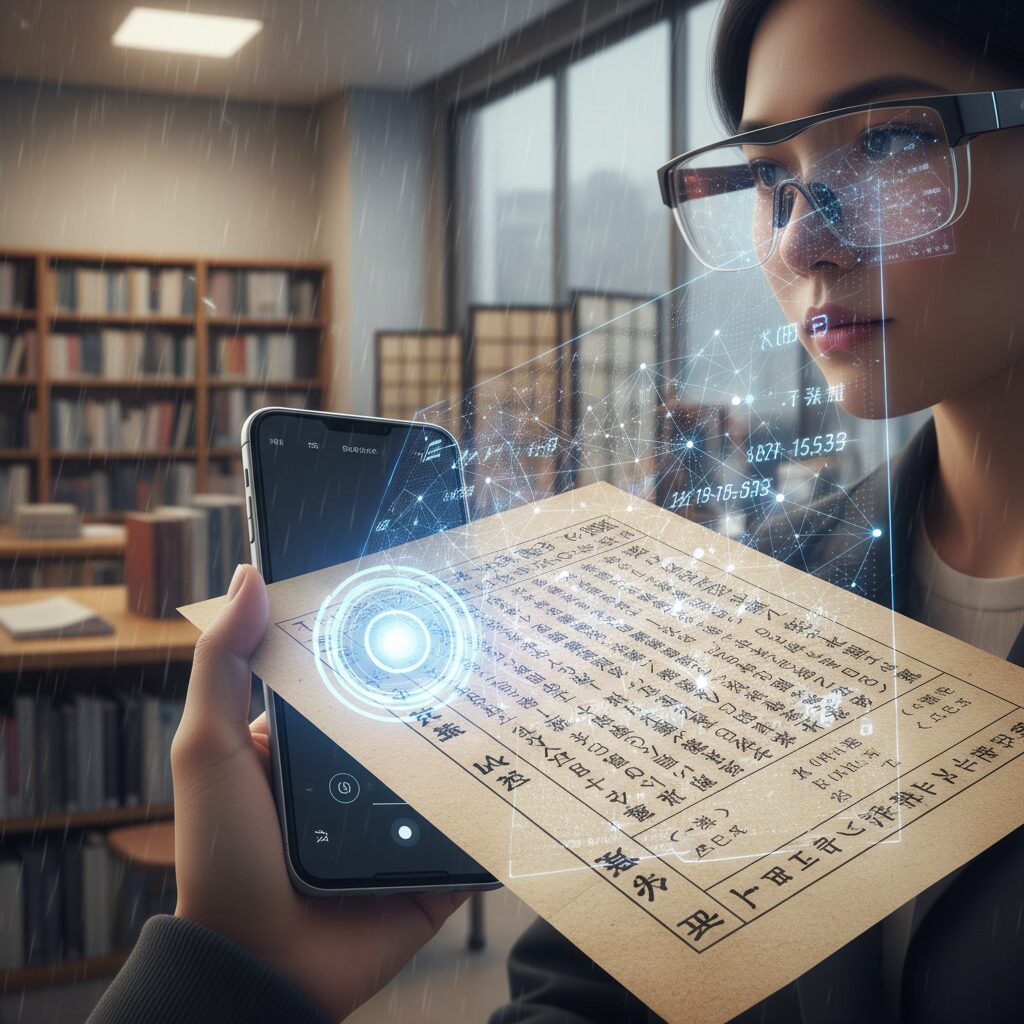

Smart glasses are transforming OCR from a handheld utility into a continuous, real-world interface. In 2026, the combination of on-device AI and Vision Language Models (VLMs) enables glasses to not only detect text in the field of view, but to interpret its meaning and instantly translate it within the user’s visual context. What used to require taking out a smartphone, opening an app, and framing a shot now happens seamlessly as you look around.

According to industry analysis presented at CES 2026, the shift toward “Invisible AI” is redefining user expectations. Instead of actively querying a device, users rely on ambient intelligence that anticipates intent. In smart glasses, OCR operates persistently in the background, recognizing signage, menus, manuals, and labels without explicit input. The interface disappears, and perception itself becomes augmented.

Camera-equipped smart glasses in the vein of the Ray-Ban Meta line demonstrate how live translation has become mainstream. When a user looks at a train timetable in a foreign language, the system detects text regions, performs semantic parsing through a lightweight VLM, and overlays translated text directly onto the original layout. Because processing is largely on-device, latency is nearly imperceptible, preserving the natural rhythm of conversation and movement.

The technical architecture differs significantly from smartphone-based OCR workflows. Instead of capturing a static image for later processing, smart glasses rely on continuous video streams and edge-optimized inference pipelines. The system must balance three constraints simultaneously: power efficiency, thermal limits, and visual clarity in AR overlays. Advances in compact NPUs and model quantization make it possible to run VLM-based recognition locally without cloud dependency, a shift emphasized in multiple 2026 AI trend reports.
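Model quantization is one of the main reasons such models fit on a wearable at all. The sketch below shows the basic idea with symmetric 8-bit quantization of a handful of weights. It is a simplified illustration under stated assumptions: the function names and sample weights are hypothetical, and production toolchains use more elaborate schemes, such as per-channel scales and calibration data.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.0, 0.6]   # hypothetical float32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four, and the rounding error per weight stays within half of one quantization step. That trade of a little precision for a large drop in memory and bandwidth is what makes local inference practical within tight power and thermal budgets.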

| Aspect | Smartphone OCR | Smart Glasses AR OCR |
|---|---|---|
| User Interaction | Active capture and tap | Passive, gaze-based detection |
| Processing Mode | Single image analysis | Continuous video stream inference |
| Latency Sensitivity | Moderate | Extremely high (must feel instant) |
| Primary Value | Text extraction | Context-aware visual augmentation |

Display technology is equally critical. Reviews of next-generation AR glasses equipped with high-brightness micro or mini-LED panels highlight significant gains in readability under daylight conditions. High pixel density ensures that translated overlays align precisely with physical text, preventing visual confusion. Even slight misalignment can break immersion, so spatial mapping algorithms continuously recalibrate position and depth.

One of the most compelling use cases is travel. A restaurant menu written entirely in Japanese can be translated into English while preserving layout hierarchy—headings, prices, and notes remain visually structured. Because VLMs understand document semantics, the system distinguishes between dish names, allergen warnings, and promotional text. This contextual awareness reduces translation errors that earlier OCR engines frequently produced when handling mixed scripts or stylized fonts.

Industrial applications are equally transformative. In logistics or manufacturing environments, workers wearing AR glasses can view translated safety instructions or part numbers superimposed directly onto machinery. Since many facilities restrict cloud connectivity for security reasons, on-device inference becomes essential. As cybersecurity experts note in 2026 trend analyses, minimizing data transmission reduces exposure risk and supports compliance in sensitive environments.

Another critical advantage is conversational augmentation. During cross-border meetings, smart glasses can transcribe and translate printed materials in real time while simultaneously supporting spoken translation features. This multimodal fusion—visual OCR plus audio processing—relies on compact VLM architectures that unify text and image understanding. The result feels less like using a tool and more like gaining a new cognitive capability.

Privacy remains a defining factor. Because smart glasses continuously analyze the environment, public concern about data capture is unavoidable. The industry response has centered on edge processing and visible recording indicators. By ensuring that text recognition occurs locally and ephemeral data is not stored or transmitted, manufacturers align with broader 2026 shifts toward on-device AI emphasized by leading technology commentators.

Energy management is the quiet constraint shaping innovation. Continuous OCR and translation demand constant camera input and inference cycles. Engineers mitigate this through event-driven activation, low-power vision co-processors, and adaptive frame-rate control. When no readable text is detected, the system scales down processing intensity. This dynamic optimization allows all-day wear without excessive battery bulk.
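Adaptive frame-rate control can be sketched in a few lines. The policy below is one hypothetical example, not a description of any shipping product: it jumps to full rate as soon as text is seen and halves the rate on every frame without text, down to a floor. The function name and the frame-rate limits are assumptions.

```python
def next_frame_rate(current_fps: float, text_detected: bool,
                    min_fps: float = 1.0, max_fps: float = 30.0) -> float:
    """Ramp up immediately when text is visible; back off gradually when it is not."""
    if text_detected:
        return max_fps
    return max(min_fps, current_fps / 2)


# Simulated detector output over eight consecutive checks.
detections = [True, False, False, False, False, False, False, True]

fps = 30.0
history = []
for seen in detections:
    fps = next_frame_rate(fps, seen)
    history.append(fps)
```

In this run the rate decays from 30 frames per second to the 1 fps floor while nothing readable is in view, then snaps back to 30 the moment text reappears. The asymmetry is deliberate: slow decay saves power, while instant recovery protects perceived latency.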

The most profound change is psychological rather than technical. When users no longer need to “scan” text intentionally, language barriers begin to feel less absolute. Signboards in airports, instructions in museums, and packaging in foreign supermarkets become immediately legible. The friction of curiosity diminishes, encouraging exploration rather than hesitation.

As Vision Language Models continue to improve contextual reasoning, smart glasses will move beyond literal translation toward summarization and intent guidance. Instead of translating every line of a complex notice, the system may highlight the key action required—such as a deadline or restriction—based on inferred user goals. This evolution represents OCR’s transition from character recognition to situational intelligence.

In this new paradigm, the smartphone is no longer the primary gateway for visual text interaction. Smart glasses reposition OCR as an always-on perceptual layer, merging recognition, translation, and semantic interpretation into a single embodied experience. The boundary between reading and understanding grows thinner, and augmented reality becomes a practical extension of human sight.

On-Device AI, NPUs, and the End of Cloud Dependency

In 2026, the shift to on-device AI is no longer a technical trend but a structural turning point for mobile OCR. Tasks that once required powerful cloud GPUs are now executed directly on smartphones and smart glasses through dedicated NPUs (Neural Processing Units). As reported in coverage of CES 2026, the center of gravity has clearly moved from “connect and process” to “process instantly on the spot.”

This transition fundamentally reduces cloud dependency, redefining privacy, latency, and even business risk. Instead of uploading sensitive images to remote servers, devices analyze text locally, complete inference internally, and return results in real time.

| Aspect | Cloud-Based OCR | On-Device NPU OCR |
|---|---|---|
| Processing Location | Remote data center | Local device |
| Latency | Network dependent | Near-instant |
| Privacy Risk | Data transmitted externally | Data stays on device |
| Offline Capability | Limited | Fully functional |

The implications are especially profound for high-sensitivity environments. Industrial handheld terminals running dedicated on-device OCR apps, such as those designed for logistics and manufacturing sites, are built to function even without network connectivity. In hospitals, underground facilities, or secure warehouses, this offline autonomy is not convenience but necessity.

According to industry analyses on Invisible AI, user expectations have shifted toward systems that act without explicit commands. That level of responsiveness is only feasible when inference happens locally. Even a few hundred milliseconds of cloud round-trip delay would break the illusion of seamless augmentation in AR glasses or live translation overlays.

NPUs are the silent enablers of this experience. Unlike general-purpose CPUs or GPUs, NPUs are optimized for matrix operations central to neural networks. This specialization allows complex VLM-based OCR models to run efficiently within the thermal and battery constraints of mobile devices. The result is sustained real-time recognition without rapid battery drain.

Privacy is another decisive factor. When meeting notes, contracts, or personal journals are processed entirely on-device, organizations avoid the compliance risks associated with external data transfer. Cybersecurity experts have emphasized the growing importance of DSPM and identity security in AI-driven workflows. Reducing cloud exposure simplifies that security posture from the outset.

Importantly, this does not mean the cloud disappears. Instead, dependency shifts from constant reliance to selective augmentation. Heavy model training and large-scale updates may still occur in data centers, but day-to-day inference increasingly resides in users’ pockets.

The end of cloud dependency does not eliminate the cloud; it restores control to the edge. For gadget enthusiasts and enterprise adopters alike, the real breakthrough is not just faster OCR, but sovereignty over data, context, and computation—executed instantly, privately, and wherever the device happens to be.

Enterprise Impact: AI-OCR Saving 60,000+ Work Hours a Year

For large enterprises, AI-OCR in 2026 is no longer a marginal efficiency tool but a structural lever for workforce transformation. In Japan, where labor shortages continue to intensify, automation of document-heavy operations has become a board-level priority.

According to reported implementation cases of solutions such as DX Suite, some organizations have cut nearly 60,000 work hours per year through AI-OCR adoption. This figure is not theoretical. It reflects actual operational data from companies that digitized high-volume paperwork flows.

60,000 hours annually equals roughly 30 full-time employees’ workload, assuming about 2,000 working hours per employee per year. When reallocated strategically, that shift directly impacts profitability, compliance capacity, and customer response speed.

| Metric | Before AI-OCR | After AI-OCR |
|---|---|---|
| Annual manual processing time | Tens of thousands of hours | Reduced by up to 60,000 hours |
| Monthly document requests | Human-dependent | Up to 1.76 million automated |
| Error handling | Manual re-check cycles | High-accuracy AI + verification |

One documented case highlights automation of up to 1.76 million requests per month. At this scale, the gains go well beyond incremental improvement. Human operators shift from repetitive transcription to exception management and decision-making.

Industry adoption spans logistics, finance, municipalities, manufacturing, construction, and HR. For example, logistics firms automate delivery slips and shipment instructions, while financial institutions process transaction forms and identity documents with high-precision recognition to mitigate compliance risks.

According to industry reports covering AI-OCR deployment case studies, the measurable benefits extend beyond labor reduction. Error rates decrease, processing lead times shrink, and entire workflows are redesigned. In many enterprises, AI-OCR becomes the trigger for broader digital transformation.

The economic signal is equally clear. Japan’s OCR solution market reached 53.27 billion yen in fiscal 2022, just over 106% of the previous fiscal year’s level (that is, growth of a little more than 6%). Continued expansion indicates sustained enterprise investment rather than short-term experimentation.

Importantly, enterprises do not eliminate human oversight entirely. With AI-OCR accuracy reaching extremely high levels when combined with operator double-checking, companies adopt a hybrid governance model. Humans supervise edge cases while AI handles the bulk flow.
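A hybrid governance model of this kind often comes down to a routing rule. The sketch below shows one simple version, in which fields above a confidence threshold are accepted automatically and the rest are queued for an operator. The `Field` type, the sample values, and the 0.98 threshold are hypothetical, and a real deployment would tune the threshold per field type and risk level.

```python
from dataclasses import dataclass


@dataclass
class Field:
    name: str
    value: str
    confidence: float  # model-reported confidence in [0, 1]


def route(fields: list[Field], threshold: float = 0.98) -> tuple[list[Field], list[Field]]:
    """Split fields into (auto-accepted, needs human review) by confidence."""
    auto = [f for f in fields if f.confidence >= threshold]
    review = [f for f in fields if f.confidence < threshold]
    return auto, review


# Hypothetical extraction result for one transfer form.
extracted = [
    Field("payee_name", "Yamada Trading", 0.995),
    Field("amount", "1,250,000", 0.91),
    Field("date", "2026-03-31", 0.99),
]
auto_accepted, needs_review = route(extracted)
```

Here only the low-confidence amount field reaches a human, which is the point of the model: operators spend their time on the handful of uncertain values rather than re-keying every form.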

When viewed strategically, saving 60,000 work hours annually is not merely a cost reduction story. It represents reclaimed organizational capacity. That capacity can be reinvested into analytics, customer engagement, product innovation, or regulatory resilience.

In an economy defined by demographic decline and competitive digital pressure, AI-OCR has become an invisible productivity engine. Enterprises that deploy it at scale are not just working faster. They are redesigning how work itself is structured.

Cultural Preservation: AI Decoding Historical Kuzushiji Manuscripts

Kuzushiji, the cursive script used in pre-modern Japanese manuscripts, has long been a formidable barrier even for native readers. Unlike in contemporary Japanese, a single syllable could be written in multiple stylistic variants, and characters were often heavily abbreviated. As a result, vast archives from the Edo period and earlier have remained accessible only to trained specialists.

In 2026, AI-powered Kuzushiji OCR is beginning to change that reality. By combining large-scale character shape databases with vision-language models, researchers are enabling machines to identify not only individual glyphs but also their semantic context within historical documents.

Kuzushiji OCR is not merely digitizing the past; it is transforming unreadable archives into searchable, analyzable cultural data.

According to reports on collaborative research by Kumamoto University and TOPPAN, AI-OCR has been applied to large collections of historical documents to extract disaster-related records. These decoded materials are now informing modern disaster prevention planning, demonstrating a direct bridge between early modern documentation and contemporary public policy.

The technical foundation differs significantly from standard modern OCR. Instead of relying solely on fixed character templates, Kuzushiji AI systems perform high-speed similarity searches across massive glyph datasets and refine results through iterative human feedback.

| Challenge | AI Approach | Impact |
|---|---|---|
| Multiple variants per syllable | Large-scale glyph similarity matching | Improved candidate accuracy |
| Severe cursive deformation | Vision-language contextual inference | Reduced misclassification |
| Unknown characters | Human-in-the-loop re-training cycle | Progressive accuracy gains |

As Kyushu University materials on Kuzushiji AI-OCR explain, systems are designed to learn continuously. When the AI fails to recognize a character, experts correct it, feeding the annotation back into the model. This re-learning cycle gradually expands recognition coverage and reduces ambiguity over time.
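The combination of similarity search and expert feedback can be sketched as follows. This is a toy model under heavy assumptions: glyphs are represented by made-up three-number feature vectors, and the functions, threshold, and data are hypothetical. It is not the method used by any of the research groups mentioned above, and it stands in for retraining by simply adding the corrected example to the search index.

```python
from math import sqrt


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_match(query, index):
    """Return (label, similarity) of the closest glyph in the index."""
    return max(((label, cosine(query, vec)) for label, vec in index),
               key=lambda item: item[1])


REVIEW_THRESHOLD = 0.9  # below this, ask an expert (hypothetical cutoff)

# Known glyph variants, each labeled with the kanji it derives from.
index = [
    ("か (from 加)", [0.9, 0.1, 0.2]),
    ("の (from 乃)", [0.1, 0.3, 0.9]),
]

# A glyph the system has never seen: another way of writing "か".
unknown_glyph = [0.2, 0.9, 0.1]
label_before, score_before = best_match(unknown_glyph, index)
needs_expert = score_before < REVIEW_THRESHOLD

# The expert's correction is fed back into the index.
if needs_expert:
    index.append(("か (from 可)", unknown_glyph))

# A slightly different instance of the same variant is now recognized.
label_after, score_after = best_match([0.25, 0.85, 0.1], index)
```

The first lookup returns only a weak match and is escalated. After the correction is stored, a similar glyph matches the new entry with high similarity. Each expert correction therefore widens what the system can read on its own.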

Importantly, scholars such as Yasuhiro Kondo have cautioned against both overestimating and underestimating AI’s capabilities in classical text recognition. AI excels at large-scale pattern processing, but paleographic nuance and historical interpretation still require domain expertise. The most effective model in 2026 is a collaborative one, where AI accelerates discovery and human scholars validate meaning.

The implications extend beyond academia. Once digitized and structured, Kuzushiji manuscripts become searchable corpora. Researchers can trace linguistic shifts, merchants can study historical trade records, and local governments can rediscover regional chronicles previously locked in archives.

For gadget enthusiasts and technologists, this represents the frontier of OCR evolution. The same advances in VLM and on-device processing that power real-time translation are now unlocking centuries-old handwriting. Cultural preservation is no longer a passive act of storage; it is an active process of computational decoding.

In this sense, AI is functioning as a time-travel interface. By converting fragile manuscripts into structured digital knowledge, Kuzushiji OCR ensures that historical memory is not only preserved, but computationally alive for future generations.

Apple Live Text vs Google Lens: Platform-Level AI Competition in 2026

In 2026, the competition between Apple Live Text and Google Lens is no longer about simple text extraction. It has evolved into a platform-level AI battle, where OCR acts as the gateway to broader ecosystems powered by on-device intelligence and vision-language models.

The real difference lies in how deeply each solution is embedded into its operating system and knowledge infrastructure. Both leverage advanced VLM capabilities, but their strategic directions clearly diverge.

| Aspect | Apple Live Text | Google Lens |
|---|---|---|
| Core Strength | Deep iOS integration | Search-driven intelligence |
| AI Processing | Primarily on-device | Hybrid (device + cloud) |
| Language Support | Strong multilingual support | 103+ languages via Google Translate |
| Extended Functions | System-wide actions via Siri | Search, homework help, object recognition |

Apple’s approach centers on seamless OS-level orchestration. With the expansion of Apple Intelligence and the evolution of Siri in 2026, Live Text does more than recognize characters on screen. It understands context across apps. Users can scan a bill and instruct Siri to summarize or initiate payment, all within a privacy-first, on-device framework. As industry observers such as Watch Impress have noted, Apple consistently prioritizes minimizing cloud dependency to reinforce user trust.

Google Lens, by contrast, extends OCR into the realm of real-time knowledge augmentation. Backed by Google’s search index and translation infrastructure, Lens transforms visual input into actionable queries. According to comparative analyses such as Beebom’s detailed breakdown, Lens excels in multilingual translation, supporting over 103 languages through Google Translate. It also interprets math equations and scientific diagrams step by step, positioning itself as a learning assistant rather than merely a text recognizer.

This reflects a philosophical divide: Apple optimizes for controlled, private, device-centric intelligence, while Google optimizes for expansive, web-connected intelligence.

Performance-wise, both platforms benefit from the 2026 shift toward high-performance NPUs and lightweight VLMs. On-device processing dramatically reduces latency, enabling near-instant recognition within camera previews. However, Google’s hybrid architecture allows deeper web-linked enrichment, whereas Apple’s tighter vertical integration ensures consistency across iOS, iPadOS, and macOS.

For gadget enthusiasts, the choice increasingly depends on ecosystem alignment. If you value frictionless system automation and privacy-centric processing, Live Text feels like an invisible layer of intelligence embedded throughout the OS. If you prefer expansive search capabilities and academic assistance powered by global data, Google Lens delivers unmatched breadth.

In 2026, OCR is no longer a standalone feature. It is the strategic front line in a broader AI platform war, where vision, language, privacy, and search converge into competing definitions of what “seeing” with AI truly means.

Accuracy, Risk, and the 0.1% Problem in High-Stakes Documents

AI-OCR accuracy in 2026 is often described as “99.9%.” In daily note-taking or receipt scanning, that number feels almost perfect. However, in high-stakes documents such as financial contracts, bank transfer forms, medical records, and government filings, the remaining 0.1% cannot be dismissed as negligible.

According to industry comparisons of invoice processing systems in 2026, combining AI-OCR with human double-check workflows can reach accuracy levels close to 99.9%. Yet even at that level, roughly one field in a thousand is still expected to be wrong. When a single digit in a bank account number or tax ID is misrecognized, the downstream impact can include compliance violations, financial loss, or legal disputes.

The difference between general-purpose OCR and mission-critical OCR becomes clear when we quantify exposure. Consider the following simplified risk perspective.

| Scenario | Fields Processed | Expected Errors at 99.9% |
|---|---|---|
| Small legal contract batch | 1,000 fields | ≈1 potential error |
| Mid-size bank daily processing | 100,000 fields | ≈100 potential errors |
| National-scale public filings | 1,000,000+ fields | 1,000+ potential errors |
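
The arithmetic behind these figures can be sketched in a few lines of Python. This is a minimal illustration, not a risk model: it assumes every field is misread independently with the same probability, whereas real OCR errors tend to cluster in poor scans and unusual layouts. The function names are illustrative.

```python
def expected_errors(fields: int, accuracy: float) -> float:
    """Expected number of misread fields at a given per-field accuracy."""
    return fields * (1.0 - accuracy)


def prob_at_least_one_error(fields: int, accuracy: float) -> float:
    """Chance that a batch contains one or more misread fields.

    Assumes independent errors, which is a simplification.
    """
    return 1.0 - accuracy ** fields


if __name__ == "__main__":
    for batch in (1_000, 100_000, 1_000_000):
        print(
            f"{batch:>9,} fields: "
            f"expected errors = {expected_errors(batch, 0.999):,.0f}, "
            f"P(at least one) = {prob_at_least_one_error(batch, 0.999):.3f}"
        )
```

Under the independence assumption, even the smallest batch of 1,000 fields has roughly a 63% chance of containing at least one error, which is why "about one error" should not be read as "probably none."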

In financial institutions such as major Japanese banks, where strict compliance and audit trails are mandatory, even a single misread character can trigger internal investigations. As reported in case studies of AI-OCR adoption in the financial sector, high-precision recognition is valued not only for efficiency but for risk avoidance and regulatory adherence.

The root of the 0.1% problem lies in edge cases. Vision-Language Models significantly reduce ambiguity by leveraging context, for example to distinguish “0” from “O” or to interpret mixed Japanese scripts correctly. However, edge cases still arise in low-quality scans, handwritten annotations, unusual layouts, or rare proper nouns that fall outside dominant training distributions.

Cybersecurity experts in 2026 also emphasize that as AI agents increasingly act autonomously, governance frameworks such as Data Security Posture Management (DSPM) and identity security controls become essential. If an AI system automatically extracts and forwards data from a contract, an OCR misrecognition can propagate instantly across systems. The speed of automation amplifies both productivity and error impact.

This is why high-stakes deployments rarely rely on raw OCR output alone. Instead, they implement layered safeguards: confidence scoring thresholds, exception routing, human-in-the-loop validation, and immutable audit logs. When confidence falls below a predefined level, the document is flagged rather than silently processed.
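
A confidence threshold with exception routing might look like the following sketch. Everything here is hypothetical: the `ExtractedField` type, the 0.98 threshold, and the in-memory `audit_log` list, which merely stands in for an immutable audit store. Real OCR engines expose confidence scores in their own formats.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: str
    confidence: float  # recognizer's score in [0, 1] (assumed format)


def route_document(
    fields: List[ExtractedField], threshold: float = 0.98
) -> Tuple[str, List[str]]:
    """Flag the document for human review if any field is below threshold."""
    low_confidence = [f.name for f in fields if f.confidence < threshold]
    decision = "human_review" if low_confidence else "auto_process"
    return decision, low_confidence


# Append-only list as a stand-in for an immutable audit log.
audit_log: List[dict] = []


def process(doc_id: str, fields: List[ExtractedField], threshold: float = 0.98) -> str:
    """Route a document and record the decision so it can be traced later."""
    decision, flagged = route_document(fields, threshold)
    audit_log.append(
        {"doc_id": doc_id, "decision": decision, "flagged": flagged, "threshold": threshold}
    )
    return decision
```

The design choice worth noting is that a single low-confidence field flags the whole document. In a bank transfer form, one doubtful digit in an account number is enough reason to stop automatic processing.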

Invisible AI, as discussed in 2026 technology trend analyses, aims to operate seamlessly in the background. Yet in critical documentation workflows, invisibility must not mean opacity. Decision traceability, explainable confidence metrics, and clear responsibility boundaries between AI and human operators are increasingly treated as non-negotiable design principles.

Ultimately, the 0.1% problem reframes the conversation around accuracy. It is no longer about achieving near-perfection in laboratory benchmarks. It is about building resilient systems that assume imperfection and contain it. In high-stakes documents, true reliability is measured not only by how often the AI is correct, but by how safely the system behaves when it is not.

Rising Memory Costs, AI Security, and the Next Bottlenecks

As mobile OCR reaches near-perfect accuracy in 2026, the real constraints are no longer recognition rates but infrastructure pressure and systemic risk. The spotlight is shifting toward memory costs, AI security governance, and emerging performance bottlenecks that could shape the next phase of on-device intelligence.

When AI becomes invisible infrastructure, hardware economics and security architecture become decisive competitive factors.

One of the most immediate concerns is rising memory prices. According to industry analysis reported by Watch Impress, the explosive demand for AI data centers has tightened global RAM supply. High-capacity memory is essential for running lightweight VLMs and on-device inference engines smoothly. As models grow more context-aware, memory footprint increases accordingly.

If consumer devices require larger RAM configurations to support advanced OCR and AI features, two consequences emerge: higher retail prices and potential segmentation between premium and mid-range devices. This creates a structural bottleneck where cutting-edge AI becomes hardware-gated rather than universally accessible.

| Constraint | Cause | Impact on Users |
|---|---|---|
| Rising RAM prices | AI data center demand surge | Higher device costs |
| Model memory footprint | Context-aware VLM expansion | Performance tier gaps |
| Edge processing limits | Thermal and power ceilings | Throttling under heavy tasks |

Security introduces an even more complex challenge. As CTC’s 2026 cybersecurity outlook highlights, AI agents increasingly act autonomously, accessing documents, interpreting sensitive data, and triggering workflows. OCR systems now process contracts, IDs, medical forms, and financial statements directly on-device.

This evolution demands stronger Data Security Posture Management and AI-specific identity governance. It is no longer sufficient to secure storage. Systems must monitor how AI interprets, transforms, and transmits extracted data. A single misconfigured permission could allow automated agents to expose confidential information at machine speed.

Another emerging bottleneck is computational sustainability. On-device AI reduces cloud dependency, but it shifts energy and thermal loads to smartphones and wearables. Extended real-time translation or document parsing sessions can trigger throttling, especially in compact smart glasses. Efficient model quantization and memory optimization are becoming strategic priorities rather than engineering refinements.
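
To see why quantization matters for memory, consider a rough estimate of weight storage at different precisions. The 2-billion-parameter model below is a hypothetical figure chosen for illustration, not a specification of any shipping VLM. The estimate covers weights only and ignores activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical model size, used only to illustrate the scaling.
HYPOTHETICAL_PARAMS = 2_000_000_000

if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_memory_gb(HYPOTHETICAL_PARAMS, bits)
        print(f"{bits}-bit weights: {size:.1f} GB")
```

Halving the bits per weight halves the storage, so moving from 16-bit to 4-bit weights cuts this hypothetical model from 4 GB to 1 GB. On a phone with limited RAM shared across apps, that can decide whether a model fits on the device at all.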

According to broader IT trend analyses for 2026, customer experience now depends on seamless AI responsiveness. Any delay caused by memory swapping, overheating, or security verification friction degrades perceived intelligence. Users expect instantaneous interpretation. Even milliseconds matter when AI overlays information in augmented reality.

The paradox is clear. OCR has become technically mature, yet the surrounding ecosystem grows more fragile. Memory markets fluctuate, AI agents gain autonomy, and edge hardware approaches physical limits.

The next bottleneck will not be recognition accuracy. It will be how efficiently, securely, and sustainably that intelligence operates within constrained silicon.

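References

- CambioML Blog: Vision-Language Models: Beyond the Limits of OCR

- ITmedia Mobile: How Capable Is the Improved Telephoto Camera on the "iPhone 17 Pro"? "Pixel 10 Pro"…

- Watch Impress: 2026 Will Be the Year of "AI and Devices": Predicting This Year's Trends (Munechika Nishida)

- Call Center Navi: [Use Cases] Success Stories of Companies That Improved Operational Efficiency by Adopting AI-OCR

- AIsmiley: Kumamoto University and TOPPAN Develop an Original Decipherment Method Using Kuzushiji AI-OCR

- Beebom: Apple Live Text vs Google Lens: Detailed Comparison

- CTC Key Technology: Cybersecurity Technology Trends to Watch in 2026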