Have you ever tried to scan an important document with your smartphone, only to find your own shadow ruining the result? For years, shadows have been the invisible enemy of mobile document scanning, degrading readability and significantly lowering OCR accuracy.

In 2026, that frustration is rapidly disappearing thanks to breakthroughs in diffusion models, transformer-based vision systems, and next-generation mobile NPUs. Modern apps no longer just brighten dark areas. They estimate and reconstruct the hidden texture and text beneath shadows, delivering results that look as if they were captured under perfectly controlled lighting.

In this article, you will explore how leading apps such as vFlat, Google Drive, and Adobe Scan leverage generative AI, how iPhone 17 Pro and Android 16 hardware reshape the scanning experience, and why OCR benchmarks now exceed 96% accuracy with AI preprocessing. If you care about gadgets, AI, and the real-world impact of computational photography, this deep dive will show you how shadow removal became a cornerstone of digital transformation.

- Why Shadows Were the Biggest Bottleneck in Mobile Document Scanning

- From Simple Binarization to Diffusion Models: The Evolution of Shadow Removal Algorithms

- DocShaDiffusion and Latent-Space AI: How Modern Models Reconstruct Hidden Text

- NTIRE 2025 Challenge: What Competitive Benchmarks Reveal About State-of-the-Art Shadow Removal

- App Showdown 2026: vFlat, Google Drive, Adobe Scan, Genius Scan, and Docutain

- The Shutdown of Microsoft Lens and the Shift Toward AI-Centric Scanning Ecosystems

- iPhone 17 Pro, Android 16, and the Rise of Hybrid Auto-Exposure and On-Device NPU Processing

- OCR Accuracy in 2025–2026: Statistical Evidence Linking AI Preprocessing and 96%+ Recognition Rates

- Japan’s Unique Scanning Culture: Books, Manga, and Compact Living Spaces Driving Innovation

- Lighting Hardware Trends in 2026: MagSafe LEDs, Ring Lights, and Physical Shadow Control

- Market Growth and Enterprise Demand: The Expanding Global and Japanese Document Scanning Industry

- What Comes Next: Real-Time Live Shadow Removal, Generative Re-Rendering, and Spatial Computing Integration

- References

Why Shadows Were the Biggest Bottleneck in Mobile Document Scanning

For years, the biggest limitation in mobile document scanning was not resolution, sensor size, or even OCR performance. It was shadows.

When users tried to scan a receipt, a contract, or a book page with a smartphone, the device itself often blocked ambient light. Hands, camera bumps, and uneven desk lighting created dark regions that were far more than cosmetic defects.

Shadows fundamentally corrupted the information layer of the document. They reduced contrast, distorted color balance, and obscured fine strokes that OCR engines rely on for character recognition.

How Shadows Broke the Scanning Pipeline

| Stage | Impact of Shadows | Result |
|---|---|---|
| Image Capture | Uneven exposure, clipped highlights, crushed blacks | Loss of visible detail |
| Preprocessing | Failed binarization, inaccurate edge detection | Distorted page structure |
| OCR | Broken character strokes, merged glyphs | Recognition errors |

Before deep learning-based correction became mainstream, most apps relied on thresholding and contrast stretching. These pixel-level techniques could brighten dark regions, but they could not reconstruct text hidden under dense shadows.
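
To make the limitation concrete, here is a minimal sketch of global thresholding on a single scanline. The pixel values, the shadow gain of 0.5, and the threshold of 128 are all illustrative assumptions, not figures from any particular app.

```python
def global_threshold(row, t=128):
    """Binarize one row of 8-bit grayscale pixels: True means 'ink'."""
    return [p < t for p in row]

# Hypothetical scanline: white paper (230) with two ink strokes (40).
clean = [230, 230, 40, 230, 230, 230, 40, 230]

# The same scanline with the right half under a shadow that halves brightness.
shadow_gain = [1.0, 1.0, 1.0, 1.0, 0.5, 0.5, 0.5, 0.5]
shadowed = [round(p * g) for p, g in zip(clean, shadow_gain)]

ink_clean = global_threshold(clean)
ink_shadowed = global_threshold(shadowed)

print(ink_clean)     # two isolated strokes
print(ink_shadowed)  # the whole shadowed half is classified as ink
```

In the shadowed row, the paper itself falls below the threshold, so the second stroke merges with its surroundings. No single threshold value separates ink from paper on both halves of this row at once.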

As benchmark data summarized in 2025 OCR accuracy analyses shows, 2023-era systems often achieved only 65% to 75% recognition accuracy on documents with shadows and no AI preprocessing. That error rate is unworkable in business workflows.

Even by 2025–2026, shadowed documents without dedicated AI removal still hover around 70% to 80% accuracy, while AI shadow removal can raise this to 95% to 97.5%. The bottleneck was never the OCR model alone. It was the lighting artifact introduced at capture.

In practical terms, a shadow could turn an “8” in a legally binding figure into a “3,” or erase a decimal point entirely.

Researchers presenting at CVPR workshops such as the NTIRE 2025 Image Shadow Removal Challenge emphasized that document shadows are especially complex because they are not uniform. They include soft gradients, hard directional shadows, and self-shadowing near page folds.

Unlike natural photography, document scanning demands geometric and tonal consistency. A slight luminance gradient across a white page disrupts binarization, which classical OCR pipelines depend on.

Moreover, shadows interact with compression artifacts and sensor noise. When dark regions are amplified during post-processing, noise is amplified as well. This introduces artificial textures that OCR systems may misinterpret as punctuation or diacritics.
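
The noise effect follows directly from arithmetic: multiplying a pixel by a gain multiplies its noise by the same gain. The sketch below uses assumed values (shadow brightness 60, noise sigma 2, gain 4) purely for illustration.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical shadowed paper patch: true brightness 60 plus sensor noise (sigma = 2).
shadow_patch = [60 + random.gauss(0, 2) for _ in range(1000)]

# Brightening the patch toward paper white (about 240) needs a gain of 4.
gain = 4.0
brightened = [gain * p for p in shadow_patch]

noise_before = statistics.pstdev(shadow_patch)
noise_after = statistics.pstdev(brightened)
print(f"noise before: {noise_before:.2f}, after: {noise_after:.2f}")
```

The standard deviation after brightening is the gain times the standard deviation before it, so a correction that lifts deep shadows also lifts their grain by the same factor.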

According to industry commentary around digital transformation initiatives, near-perfect OCR—approaching 99.9%—is required to eliminate manual verification. Shadow-induced ambiguity was therefore not a minor annoyance but a structural barrier to full automation.

In short, while camera megapixels improved and neural OCR models became transformer-based and context-aware, the capture-stage shadow problem quietly dictated the ceiling of achievable accuracy. Until shadows could be physically minimized or computationally reconstructed, mobile document scanning could never be truly reliable.

From Simple Binarization to Diffusion Models: The Evolution of Shadow Removal Algorithms

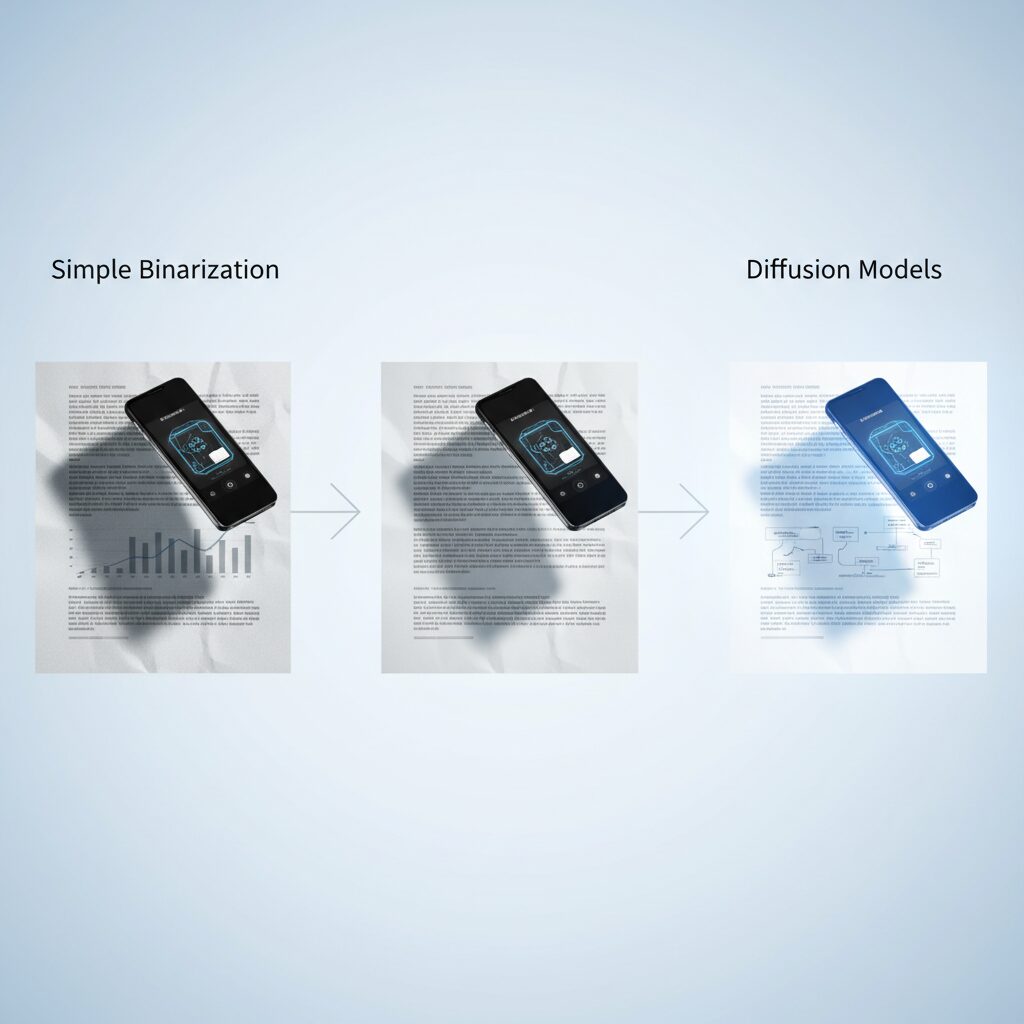

In the early days of smartphone document scanning, shadow removal relied on extremely simple pixel-level tricks. Algorithms primarily applied binarization, adaptive thresholding, and contrast stretching to force text into black and backgrounds into white. While this approach sometimes improved readability, it often destroyed subtle textures and failed completely under strong, uneven shadows.

These rule-based methods treated shadows as mere brightness differences. They did not “understand” illumination or document structure. As a result, hard shadows caused by fingers or book curvature frequently led to broken characters, merged strokes, and significant OCR degradation.

The first real leap came with learning-based segmentation and GAN-based restoration. Instead of blindly adjusting pixels, models began detecting shadow regions explicitly and reconstructing them using adversarial training. GANs generated plausible shadow-free images, improving visual consistency compared to classical filters.

| Generation | Core Technique | Main Limitation |
|---|---|---|
| Early 2010s | Binarization / Thresholding | Loss of texture and detail |
| Late 2010s | GAN-based restoration | Artifacts near shadow boundaries |
| Mid-2020s | Transformer & Diffusion models | High computational cost |

However, GAN-based systems often struggled with stability and edge artifacts. Shadow boundaries could appear unnatural, especially when illumination gradients were complex. According to research presented at CVPR workshops and related benchmarks such as NTIRE 2025, these artifacts became a measurable bottleneck in high-resolution document restoration tasks.

The next paradigm shift emerged with transformer architectures. By modeling long-range dependencies, transformers enabled algorithms to reference non-shadow regions across the entire page. This global context modeling significantly improved illumination consistency, particularly in documents with large soft shadows or uneven lighting.

The most transformative development, though, has been the adoption of diffusion models. Rather than generating a corrected image in one pass, diffusion models iteratively denoise from a learned distribution. Research such as DocShaDiffusion on arXiv demonstrates how operating in latent space allows selective shadow-focused restoration while preserving non-shadow regions.

Diffusion-based shadow removal does not merely brighten dark areas; it reconstructs lost information step by step. Modules like soft-mask generation guide the denoising process, ensuring that only shadow regions are modified. This dramatically reduces color shifts and texture inconsistencies.

Benchmarks referenced in NTIRE 2025 reports show that diffusion-driven approaches outperform earlier GAN systems on complex datasets containing self-shadows and irregular projections. The improvement is particularly visible in fine typography and thin strokes, where earlier methods often failed.

What makes this evolution remarkable is not just visual quality, but semantic awareness. Modern systems implicitly learn document structure—text lines, margins, background tone—allowing them to infer what “should” exist beneath a shadow. This represents a transition from pixel correction to probabilistic reconstruction grounded in learned priors.

In practical terms, the journey from simple binarization to diffusion models reflects a broader shift in computer vision: from deterministic image processing to generative modeling. Shadow removal has evolved from contrast adjustment to intelligent restoration, laying the technical foundation for today’s high-precision mobile document workflows.

DocShaDiffusion and Latent-Space AI: How Modern Models Reconstruct Hidden Text

DocShaDiffusion represents a structural shift in how document shadow removal is approached. Instead of editing pixels directly, it operates in latent space, where images are compressed into abstract feature representations before reconstruction. According to the arXiv paper introducing DocShaDiffusion in 2025, this strategy allows the model to focus on semantic structure such as characters, paper texture, and layout rather than surface brightness alone.

This latent-space strategy is crucial because shadows do not merely darken pixels—they obscure information. Traditional CNN or GAN-based methods often brighten shadowed regions, but they struggle when characters are partially lost. Diffusion models, by contrast, progressively denoise a representation, enabling controlled reconstruction guided by contextual cues.

Core Architecture of DocShaDiffusion

| Module | Function | Impact on Reconstruction |
|---|---|---|
| SSGM | Generates soft shadow masks | Localizes restoration to shadow regions |
| SMGDM | Mask-guided diffusion control | Preserves non-shadow texture fidelity |

The Shadow Soft-mask Generation Module (SSGM) estimates shadow intensity as a gradient rather than a binary boundary. This matters because document shadows are rarely uniform. By modeling soft transitions, the system avoids harsh correction edges that plagued earlier GAN pipelines.
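
The difference between a soft mask and a binary one can be sketched with a hand-written heuristic. This is not the SSGM, which is a learned module. The function `soft_shadow_mask`, the background estimate, and the 0.25 cutoff are assumptions chosen for illustration.

```python
def soft_shadow_mask(background, paper_white):
    """Return per-pixel shadow strength in [0, 1].

    background: estimated local paper brightness with ink ignored.
    0.0 means fully lit; larger values mean deeper shadow.
    """
    return [min(1.0, max(0.0, 1.0 - b / paper_white)) for b in background]

# Hypothetical background estimate along one scanline: lit paper fading into shadow.
background = [240, 240, 216, 168, 120, 120]

mask = soft_shadow_mask(background, paper_white=240)

# A hard mask for comparison: everything past an arbitrary cutoff counts as shadow.
binary = [1.0 if m > 0.25 else 0.0 for m in mask]

print([round(m, 2) for m in mask])
print(binary)
```

The soft mask rises gradually through the penumbra, while the binary mask jumps from 0 to 1 at a single pixel. A correction driven by the binary mask would therefore leave a visible seam at that pixel.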

The Shadow Mask-aware Guided Diffusion Module (SMGDM) then constrains the denoising trajectory. In diffusion models, images are reconstructed step by step from noise. By injecting mask awareness at each step, DocShaDiffusion restores hidden text while leaving well-lit areas untouched. Research from Adobe and related latent-guided diffusion studies supports this principle: conditioning diffusion with structural priors significantly improves fidelity in restoration tasks.
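
The idea of injecting the mask at every step can be illustrated with a toy loop. This is a sketch of mask-weighted iterative updating in general, not the SMGDM itself. `toy_step` is a hypothetical stand-in for a learned denoiser, and all pixel values are made up.

```python
def guided_refine(observed, mask, denoise_step, steps=10):
    """Iteratively update pixels in proportion to their mask value.

    After every step the proposal is blended with the observed image, so
    pixels with mask 0 stay exactly as captured.
    """
    x = list(observed)
    for _ in range(steps):
        proposal = denoise_step(x)
        x = [m * p + (1.0 - m) * o for m, p, o in zip(mask, proposal, observed)]
    return x

def toy_step(x, target=240.0, rate=0.5):
    """Stand-in for a learned denoiser: nudges every pixel toward paper white."""
    return [v + rate * (target - v) for v in x]

observed = [240.0, 240.0, 180.0, 120.0]   # lit, lit, penumbra, deep shadow
mask = [0.0, 0.0, 0.5, 1.0]               # soft shadow mask for the same pixels

restored = guided_refine(observed, mask, toy_step)
print([round(v, 1) for v in restored])
```

The two lit pixels come back bit-for-bit unchanged, the fully masked pixel moves almost all the way to paper white, and the penumbra pixel is only partly corrected. A real system would replace `toy_step` with a trained network and operate on latent features rather than raw pixels.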

This distinction becomes especially important for high-contrast “hard shadows.” In benchmark discussions surrounding NTIRE 2025 shadow removal challenges, top-performing systems increasingly relied on geometry-aware or diffusion-based reasoning to handle self-shadows and irregular projections. Latent diffusion aligns with this trend because it integrates global context during reconstruction.

Compared with GAN-based systems, diffusion models require more computation, but they offer superior stability and fewer boundary artifacts. Transformer-based approaches excel at global context modeling, yet diffusion adds iterative refinement, which is critical when reconstructing partially occluded characters.

From an OCR perspective, the implications are measurable. Recent OCR benchmark analyses in 2025–2026 show that AI-driven preprocessing—including advanced shadow removal—pushes recognition accuracy into the 95–97% range for previously shadowed documents. That improvement is not just cosmetic; it directly reduces downstream correction costs.

In practical deployment, modern smartphones increasingly offload heavy diffusion steps to optimized NPUs or hybrid cloud pipelines. As mobile silicon improves, latent-space reconstruction is moving closer to real-time execution. What once required server-grade GPUs is gradually becoming feasible on edge devices.

DocShaDiffusion therefore signals more than an algorithmic upgrade. It reflects a broader paradigm: document scanning is evolving from image enhancement to probabilistic content reconstruction. By reasoning in latent space, modern AI systems do not merely remove shadows—they infer what was hidden beneath them and rebuild it with structural coherence.

NTIRE 2025 Challenge: What Competitive Benchmarks Reveal About State-of-the-Art Shadow Removal

The NTIRE 2025 Image Shadow Removal Challenge, held in conjunction with CVPR 2025, has become a defining benchmark for the state of the art in shadow removal. With more than 300 participants competing on the WSRD+ dataset, the challenge did not merely rank models—it revealed where the field is structurally heading.

Unlike earlier benchmarks focused on relatively simple cast shadows, WSRD+ incorporates self-shadows, irregular object boundaries, and complex illumination transitions. This design forces algorithms to move beyond superficial brightness correction toward physically consistent reconstruction.

The top-performing teams, including X-Shadow and CV SVNIT, integrated geometric priors such as depth and surface normals into their pipelines. According to the official CVPR workshop report, these additional cues enabled models to distinguish between illumination change and intrinsic texture variation with far greater reliability.

This shift is critical. In document or real-world scanning scenarios, a model must avoid “over-cleaning” textured regions while still neutralizing illumination artifacts. The leading NTIRE solutions achieved this by fusing multi-branch architectures that separately reason about structure and appearance before recombining them.

| Aspect | Earlier Approaches | NTIRE 2025 Top Methods |
|---|---|---|
| Shadow Modeling | Implicit, pixel-level adjustment | Explicit mask + geometry-aware modeling |
| Context Usage | Local neighborhoods | Global semantic context |
| Complex Self-Shadows | Often unstable | Physically consistent reconstruction |

Another notable trend is the dominance of diffusion-inspired and transformer-augmented pipelines. While GAN-based systems previously led many restoration tasks, NTIRE 2025 results indicate that staged denoising processes and long-range attention mechanisms produce cleaner shadow boundaries and fewer halo artifacts.

Importantly, the evaluation metrics in NTIRE 2025 go beyond simple PSNR gains. Structural similarity and perceptual fidelity play a central role, reflecting a broader industry demand: outputs must look natural to humans, not just score well numerically.
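
A short sketch shows why PSNR alone can be a blunt instrument. PSNR depends only on mean squared error, so two restorations with very different error patterns can score identically. The pixel values below are illustrative assumptions.

```python
import math

def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if len(reference) != len(restored):
        raise ValueError("images must have the same number of pixels")
    mse = sum((r - s) ** 2 for r, s in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

reference = [200, 200, 200, 200]
uniform_error = [198, 198, 198, 198]   # whole patch slightly too dark
local_error = [200, 200, 200, 196]     # one pixel visibly off

print(psnr(reference, uniform_error))
print(psnr(reference, local_error))
```

Both restorations have a mean squared error of 4 and therefore the same PSNR, even though one is a faint global tint and the other is a localized defect. Structural and perceptual metrics are meant to separate cases like these.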

For practitioners and product developers, the implication is clear. Competitive benchmarks now reward models that integrate illumination physics, global reasoning, and fine-grained masking in a unified framework. Incremental brightness tuning is no longer competitive.

NTIRE 2025 therefore serves as more than a leaderboard—it acts as a roadmap. The convergence of geometry, generative modeling, and contextual reasoning signals that future shadow removal systems will increasingly resemble holistic scene reconstruction engines rather than image filters.

App Showdown 2026: vFlat, Google Drive, Adobe Scan, Genius Scan, and Docutain

In 2026, document scanning apps no longer compete on basic cropping or PDF export. The real battlefield is AI-powered shadow removal, generative restoration, and OCR synergy. If you care about clean data, not just clean images, the differences between vFlat, Google Drive, Adobe Scan, Genius Scan, and Docutain are more strategic than they first appear.

| App | Core Shadow Tech | Best For |
|---|---|---|
| vFlat | Curvature + Finger Removal AI | Books, manga, bound materials |
| Google Drive | Shadow Erasing (Magic Eraser-based) | Quick Android workflows |
| Adobe Scan | Firefly Generative Removal | Business-grade restoration |
| Genius Scan | Smart filtering engine | Readable, lightweight scans |
| Docutain | Error-reduction + wrinkle cleaning | Lens replacement users |

vFlat remains unmatched for book scanning. Its AI detects page curvature and finger occlusion, then reconstructs hidden text using surrounding visual data. For users digitizing bound books non-destructively, this is transformative. The ability to flatten two-page spreads while suppressing central gutter shadows makes it uniquely optimized for “self-scanning” culture.

Google Drive, redesigned in late 2025, brings Shadow Erasing directly into Android’s default ecosystem. Leveraging segmentation technology refined in Google Photos, it identifies shadow regions immediately after capture. One tap equalizes illumination, making it ideal for fast receipt uploads and cloud-first workflows.

Adobe Scan pushes the boundary with generative AI. Instead of merely brightening dark areas, its Firefly-powered removal attempts to reconstruct missing characters inside hard shadows. In difficult lighting conditions, this contextual regeneration significantly improves downstream OCR reliability. According to 2025–2026 benchmark analyses, AI-preprocessed documents can reach 95–97.5% OCR accuracy even when shadows are present, a major leap from earlier generations.

Genius Scan takes a different approach. Its strength lies in smart filtering and readability enhancement rather than aggressive generative reconstruction. The result is clean, contrast-optimized documents with minimal processing latency. For professionals who prioritize speed and consistent legibility over experimental AI reconstruction, it remains dependable.

Docutain, increasingly adopted after Microsoft Lens announced its shutdown effective March 2026, emphasizes error reduction and wrinkle correction. Its filtering engine is particularly effective at cleaning uneven lighting and paper creases, making it a practical alternative for enterprise users migrating from Lens-based workflows.

For power users, the choice depends on intent. Archiving books favors vFlat. Cloud-native Android workflows favor Google Drive. Legal and financial document cleanup favors Adobe Scan. Lightweight clarity favors Genius Scan. Structured enterprise migration favors Docutain.

In an era where OCR accuracy directly impacts automation, shadow removal is no longer cosmetic. It is infrastructure.

The Shutdown of Microsoft Lens and the Shift Toward AI-Centric Scanning Ecosystems

The shutdown of Microsoft Lens marks a symbolic turning point in mobile document scanning. For more than a decade, Lens was a gateway tool for business users who wanted quick shadow cleanup, whiteboard enhancement, and seamless export to the Microsoft ecosystem.

In September 2025, Microsoft announced that Lens would be discontinued, with full support ending on March 9, 2026. According to coverage by PCMag and ZDNET, users were given only a limited transition window to migrate stored scans and workflows to alternative platforms.

This was not merely the retirement of an app. It signaled Microsoft’s strategic pivot toward AI-centric productivity built around Copilot.

| Aspect | Microsoft Lens (Before 2026) | Post-Shutdown Direction |
|---|---|---|
| Core Value | Standalone scan & filter app | Integrated AI inside Copilot ecosystem |
| Shadow Handling | Magic-style filters | AI-driven contextual processing |
| User Workflow | Manual capture → export | Capture → semantic understanding → automation |

Lens originally gained popularity because of its “magic” filters that reduced glare and shadows on whiteboards and paper documents. However, its architecture was rooted in enhancement, not understanding. It optimized pixels, but it did not deeply interpret content.

By 2026, that limitation became strategically significant. Modern scanning ecosystems no longer stop at image cleanup. They extract structured data, classify documents, trigger workflows, and integrate with cloud-based document AI systems.

Google Cloud’s Document AI release trajectory illustrates this shift clearly. Scanning is now positioned as an entry point into automated data pipelines rather than a final output step.

After Lens’s discontinuation, users migrated primarily to Adobe Scan, Google Drive’s revamped scanner, and Docutain. The transition reflects a broader market consolidation around platforms that combine on-device AI with cloud-scale processing.

Adobe integrates generative AI technologies derived from Firefly to reconstruct missing information. Google embeds segmentation and shadow erasing directly into Android’s scanning workflow. These ecosystems are tightly coupled with storage, collaboration, and automation layers.

Market data from Data Insights Reports projects the global document scanning services market to reach approximately 4.5 billion USD in 2026, growing at a CAGR of 9.6%. This growth is fueled not by standalone apps, but by enterprise digital transformation demand.

Microsoft’s decision therefore aligns with a macro trend. Rather than maintaining a separate scanning utility, the company is reallocating resources toward AI agents that interpret documents, summarize them, and generate actions inside productivity suites.

For power users, the implication is profound. The scanning app is no longer the product. It is a sensor feeding an AI ecosystem. Shadow removal, OCR, and formatting are assumed capabilities.

The real differentiation now lies in orchestration—how captured information flows into analytics, compliance systems, and autonomous workflows.

The shutdown of Microsoft Lens thus represents the end of the enhancement era and the acceleration of AI-centric scanning ecosystems. In 2026, scanning is no longer about cleaning documents. It is about activating them.

iPhone 17 Pro, Android 16, and the Rise of Hybrid Auto-Exposure and On-Device NPU Processing

The evolution of document scanning in 2026 is no longer just about better algorithms. It is about how flagship hardware such as iPhone 17 Pro and Android 16 devices fuse imaging pipelines with on-device NPUs to execute shadow removal and exposure control in real time.

At the core of this shift is a new generation of hybrid auto-exposure systems, tightly integrated with neural processing units. Instead of choosing a single exposure value, devices now evaluate bright paper regions and deep shadows simultaneously, then synthesize an optimized composite before the image is even saved.

According to reports on Android 16 feature development, the imaging stack now supports multi-frame exposure fusion combined with AI-based white balance estimation. This allows the camera to preserve highlight detail in white paper while lifting underexposed shadow regions without amplifying noise.
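
The general idea behind multi-frame exposure fusion can be sketched as a per-pixel weighted average that favors well-exposed samples. This is a simplified illustration in the spirit of classical exposure fusion, not the pipeline any specific phone uses. The Gaussian weight, `sigma`, and the sample values are assumptions.

```python
import math

def well_exposedness(v, sigma=0.2):
    """Weight a normalized pixel value (0..1) by how close it is to mid-gray."""
    return math.exp(-((v - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(frames):
    """Per-pixel weighted average of aligned frames, favoring well-exposed samples."""
    fused = []
    for samples in zip(*frames):
        weights = [well_exposedness(v) for v in samples]
        total = sum(weights)
        fused.append(sum(w * v for w, v in zip(weights, samples)) / total)
    return fused

# Hypothetical aligned pixels: [lit paper, shadowed paper]
short_exposure = [0.60, 0.10]   # protects highlights, but the shadow is too dark
long_exposure = [1.00, 0.45]    # lifts the shadow, but the lit paper clips

fused = fuse_exposures([short_exposure, long_exposure])
print([round(v, 3) for v in fused])
```

For the lit pixel the result stays close to the short exposure, and for the shadowed pixel it stays close to the long exposure. Production pipelines add frame alignment, multi-scale blending, and noise modeling on top of this basic weighting.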

Apple’s iPhone 17 Pro introduces a redesigned camera layout, relocating the flash and LiDAR module. Industry analysis notes that this change improves lighting uniformity in close-range capture scenarios, particularly during macro-style document scanning.

| Feature | iPhone 17 Pro | Android 16 Flagships |
|---|---|---|
| Auto-Exposure | Multi-frame fusion via ISP | Hybrid exposure + AI weighting |
| On-device AI | Neural Engine acceleration | Dedicated NPU pipelines |
| Shadow Handling | Pre-capture tonal balancing | Real-time segmentation + fusion |

The real breakthrough lies in computation happening locally. Diffusion-based shadow removal models, such as those discussed in recent AAAI and arXiv publications, traditionally required heavy processing. In 2026, optimized variants run partially or fully on mobile NPUs, reducing reliance on cloud inference.

This architectural shift delivers two key benefits. First, latency drops dramatically, enabling near-instant preview correction. Second, privacy improves because sensitive business documents no longer need to leave the device for enhancement.

Benchmark trends in OCR performance further validate this approach. Recent 2025–2026 accuracy studies show that AI-preprocessed documents with advanced shadow removal reach 95% to 97.5% recognition accuracy under shadowed conditions, compared with as low as 70% to 80% without preprocessing.

Hybrid exposure plays a crucial role here. By preserving subtle stroke contrast before OCR even begins, the system reduces the need for aggressive post-capture reconstruction. This synergy between ISP hardware and NPU inference is what defines the current generation.

Importantly, these improvements are not limited to static correction. Emerging “live shadow balancing” previews allow users to see flattened lighting in the viewfinder itself. Analysts covering next-generation OCR technologies suggest that this real-time capability will become standard as mobile NPUs continue scaling in throughput.

In practical terms, this means fewer retakes, higher OCR trust levels, and smoother enterprise workflows. The rise of hybrid auto-exposure combined with on-device neural acceleration marks a turning point where hardware and AI no longer operate sequentially, but collaboratively, redefining what smartphone scanning can achieve.

OCR Accuracy in 2025–2026: Statistical Evidence Linking AI Preprocessing and 96%+ Recognition Rates

In 2025–2026, OCR accuracy is no longer limited by the recognition engine alone. It is increasingly determined by how intelligently the image is preprocessed before text extraction begins. Recent benchmark data clearly shows that AI-driven preprocessing—especially shadow removal and geometric correction—directly correlates with 96%+ recognition rates in real-world scenarios.

According to the 2025 OCR Accuracy Benchmark analysis published by Sparkco, documents processed with AI-based preprocessing pipelines improved average recognition rates by approximately five percentage points compared to 2023 systems. The difference becomes dramatic when shadows are present.

| Condition | OCR Accuracy (2023) | OCR Accuracy (2025–2026) |
|---|---|---|
| Printed, no shadow | 95.0% | 98.5% |
| Shadow present, no AI preprocessing | 65–75% | 70–80% |
| Shadow present, AI shadow removal applied | 85–92% | 95–97.5% |
| Handwritten documents | 72% | 90% |

The most striking insight is the third row. When diffusion-based shadow removal and advanced denoising are applied before OCR, recognition accuracy jumps into the 95–97.5% range. This is not a marginal gain—it represents the difference between manual verification and near-full automation.

Research presented at AAAI and arXiv on diffusion-based shadow removal models demonstrates why this improvement occurs. Traditional binarization simply increases contrast. Modern latent-space diffusion models, however, reconstruct obscured strokes and restore background uniformity before text segmentation even begins. As a result, character boundaries become statistically cleaner, reducing substitution and deletion errors.
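
Substitution and deletion errors are commonly counted with edit distance. The sketch below computes a character-level accuracy that way. The cited benchmarks may define accuracy differently, so treat `character_accuracy` and the sample strings as illustrative assumptions.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance: minimum substitutions, deletions, and insertions."""
    prev = list(range(len(hyp) + 1))
    for i, rc in enumerate(ref, start=1):
        curr = [i]
        for j, hc in enumerate(hyp, start=1):
            cost = 0 if rc == hc else 1
            curr.append(min(prev[j] + 1,          # delete a reference character
                            curr[j - 1] + 1,      # insert a hypothesis character
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]

def character_accuracy(ref, hyp):
    """1 minus character error rate, clamped at 0; ref must be non-empty."""
    return max(0.0, 1.0 - edit_distance(ref, hyp) / len(ref))

reference = "Total: 1,280.50"
ocr_output = "Total: 1,23050"   # hypothetical: '8' read as '3', decimal point lost

errors = edit_distance(reference, ocr_output)
accuracy = character_accuracy(reference, ocr_output)
print(errors, round(accuracy, 3))
```

Two character errors in a fifteen-character line still leave a character accuracy near 87%, yet the amount is wrong by orders of magnitude. This is why accuracy gains in the last few percentage points matter so much for financial and legal documents.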

VAO AI’s 2026 OCR industry analysis further notes that when systems approach 99.9% accuracy, human validation becomes economically unnecessary for large-scale workflows. While most mobile pipelines are not yet consistently at 99.9%, crossing the 96% threshold already reduces manual correction costs by a substantial margin, especially in logistics, finance, and legal digitization.

Another key factor is contextual correction powered by large language models. For handwritten documents, recognition improved from 72% in 2023 to roughly 90% in 2025–2026 benchmarks. This gain is partly due to semantic post-processing that corrects ambiguous characters using surrounding text probability, compounding the benefits of visual cleanup.

In practical terms, the workflow now looks like this: capture → geometric flattening → shadow diffusion restoration → adaptive exposure normalization → transformer-based OCR → language-model correction. Each preprocessing stage reduces entropy in the input signal. The cleaner the visual field, the more confidently the recognition model converges.

The statistical evidence therefore supports a clear conclusion. OCR engines did not suddenly become 30% smarter. Instead, AI preprocessing eliminated the physical artifacts—shadows, skew, uneven illumination—that previously sabotaged recognition accuracy. In 2026, high-performance OCR is no longer just about reading text. It is about reconstructing the document so perfectly that recognition becomes almost trivial.

Japan’s Unique Scanning Culture: Books, Manga, and Compact Living Spaces Driving Innovation

Japan’s scanning culture has evolved in a distinctive direction, shaped by two powerful forces: a deep affection for printed books and manga, and the realities of compact urban living. In cities where storage space is limited and apartments are measured in square meters rather than rooms, digitization is not just a convenience but a practical necessity.

Particularly symbolic is the practice known as “jisui,” in which readers digitize their own books for personal archives. Unlike Western markets where office documents dominate scanning use cases, in Japan it is often paperbacks, manga volumes, sheet music, and fan-made publications that are placed under the camera.

This cultural demand has directly influenced the direction of scanning innovation. Apps such as vFlat Scan gained strong traction in Japan precisely because they address non-destructive book scanning. According to its App Store listing and user reviews, features like page curvature correction and automatic finger removal are heavily valued by Japanese users who want to preserve physical books while eliminating shadows in the page gutter.

| Cultural Factor | Scanning Requirement | Innovation Triggered |
|---|---|---|
| Manga & paperbacks | Accurate gutter shadow removal | AI-based curvature flattening |
| Small apartments | Space-saving digitization | Mobile-first scanning workflows |
| Collector culture | Non-destructive scanning | Finger & hand shadow removal AI |

The Japanese housing environment further accelerates this trend. Real estate data consistently shows that urban units are compact, making large bookshelves impractical. As a result, scanning becomes a form of digital decluttering. This aligns with the broader expansion of Japan’s document scanning and enterprise content management markets, which industry analyses project to grow steadily through 2034.

Lighting accessories popular in Japan also reflect this spatial sensitivity. Foldable ring lights and compact panel lights rank highly in domestic electronics retailers, offering soft, even illumination that reduces physical shadows before software correction is applied. The preference is not for studio-scale equipment but for tools that can be deployed instantly on a small desk.

What makes Japan unique is the fusion of cultural preservation and technological experimentation. Readers want flawless digital copies of delicate manga prints, yet they also want to keep the original volumes intact. This tension pushes developers to refine AI shadow removal, page flattening, and texture reconstruction far beyond basic document scanning.

In effect, compact living spaces and a strong print culture have turned Japan into a living laboratory for mobile scanning innovation. The demand is not merely for clearer images, but for faithful digital surrogates of cherished physical media, produced effortlessly within the constraints of everyday life.

Lighting Hardware Trends in 2026: MagSafe LEDs, Ring Lights, and Physical Shadow Control

In 2026, lighting hardware for smartphone scanning is no longer a secondary accessory but a core performance factor. Even though AI-driven shadow removal has advanced dramatically, **physical light control still determines how much information the sensor can capture in the first place**.

According to major Japanese retailers such as Yodobashi Camera and Yamada Denki, compact LED lights and ring lights consistently rank among the top smartphone accessories. This trend reflects a simple reality: better input light reduces computational correction and improves OCR reliability.

Three hardware categories define the 2026 landscape.

| Category | Key Feature | Primary Benefit |
|---|---|---|
| MagSafe LED | Magnetic rear attachment | Coaxial lighting minimizes device shadow |
| Ring Light | 360° circular illumination | Uniform top-down document lighting |
| Panel / Square Light | Surface light diffusion | Softer shadows and reduced glare |

MagSafe-compatible LEDs such as the Ulanzi LM19 have become especially popular among iPhone users. By attaching directly to the rear magnet array, the light aligns closely with the camera’s optical axis. This configuration significantly reduces lateral shadows caused by hand positioning. In practical terms, it prevents the classic “crescent shadow” that appears when scanning receipts at close range.
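The benefit of near-coaxial lighting can be illustrated with a similar-triangles estimate. The sketch below is a simplified model, not a measurement of any particular product: it assumes a pinhole camera and a point light at the same height above the page, and the function name `visible_shadow_width` and all distances are hypothetical values chosen for illustration.

```python
def visible_shadow_width(light_offset_mm, occluder_height_mm, light_height_mm):
    """Width of the shadow strip the camera sees beside a raised object.

    Simplified model (assumption): pinhole camera and point light sit at the
    same height above the page and are separated sideways by light_offset_mm.
    By similar triangles the visible shadow is offset * h / (H - h).
    """
    if not 0 <= occluder_height_mm < light_height_mm:
        raise ValueError("occluder must sit between the page and the light")
    return (abs(light_offset_mm) * occluder_height_mm
            / (light_height_mm - occluder_height_mm))


# Hypothetical setup: phone held 250 mm above the page, a fingertip or curled
# receipt edge raised 20 mm above it.
desk_lamp = visible_shadow_width(150, 20, 250)   # light far from the lens
attached = visible_shadow_width(15, 20, 250)     # light next to the lens

print(f"desk lamp: {desk_lamp:.1f} mm, attached light: {attached:.1f} mm")
```

In this model the visible shadow scales linearly with the distance between light and lens, which is why moving the light onto the back of the phone shrinks the shadow so sharply.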

Ring lights, long favored by content creators, have evolved into document-optimized tools. Modern models offer 10-step dimming and adjustable color temperature. This matters because hybrid auto-exposure systems in Android 16 and iOS 26 perform best when the illumination is stable and evenly distributed. **Consistent lighting reduces the burden on multi-frame exposure fusion algorithms**, resulting in cleaner whites and fewer post-processing artifacts.
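To make the exposure fusion point concrete, here is a minimal per-pixel sketch in the spirit of well-exposedness weighting. It is a simplification under stated assumptions, not the pipeline used by Android or iOS: frames are grayscale 2D lists with values in [0, 1], the Gaussian weight centered on mid-gray and the `sigma` default are illustrative choices, and real implementations typically blend across multiple scales.

```python
import math


def well_exposedness(value, sigma=0.2):
    """Weight that peaks at mid-gray (0.5) and falls off toward 0 and 1."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))


def fuse_exposures(frames, sigma=0.2):
    """Blend equally sized grayscale frames pixel by pixel.

    Each pixel is a weighted average across frames, where better-exposed
    samples (closer to mid-gray) receive larger weights.
    """
    height, width = len(frames[0]), len(frames[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            values = [frame[y][x] for frame in frames]
            # Small epsilon avoids division by zero if all weights vanish.
            weights = [well_exposedness(v, sigma) + 1e-12 for v in values]
            fused[y][x] = (sum(w * v for w, v in zip(weights, values))
                           / sum(weights))
    return fused


# Hypothetical bracket: one underexposed and one better-exposed frame.
dark = [[0.05, 0.05], [0.05, 0.05]]
bright = [[0.45, 0.45], [0.45, 0.45]]
print(fuse_exposures([dark, bright]))
```

When the lighting is even, the bracketed frames already agree and the weights have little to reconcile; large disagreements between frames are where fusion artifacts tend to appear.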

Panel-style square LEDs address a different issue: micro-reflections on glossy paper. By spreading light across a wider surface, they soften specular highlights that can confuse OCR engines. As recent OCR benchmarks have shown, preprocessing quality can push recognition accuracy above 96% even in previously shadow-heavy documents. Hardware diffusion directly supports that pipeline.

Another notable shift in 2026 is the growing awareness of “physical shadow control.” Users are adjusting light angle, height, and diffusion before relying on AI fixes. This approach mirrors findings from computer vision research presented at CVPR workshops, where better illumination at capture consistently improves downstream restoration performance.

Interestingly, hardware design changes in flagship phones have also influenced accessory trends. With the iPhone 17 Pro relocating flash and LiDAR components, some existing scanning rigs lost compatibility. As a result, modular lighting systems and adjustable mounts are gaining traction among professionals who require predictable geometry.

The key insight for 2026 is clear: software can reconstruct, but hardware defines the ceiling of quality. By combining MagSafe-aligned LEDs, calibrated ring lights, or diffused panel sources with modern shadow-removal algorithms, users achieve near-uniform scans even in constrained desk environments.

For gadget enthusiasts, lighting is no longer an afterthought. It is a precision instrument that works in tandem with computational photography, transforming smartphones into controlled micro-studios capable of producing archive-grade document captures.

Market Growth and Enterprise Demand: The Expanding Global and Japanese Document Scanning Industry

The global document scanning industry is entering a new growth phase in 2026, driven not only by technological innovation but also by structural demand from enterprises undergoing digital transformation. According to Data Insights Reports, the global document scanning services market is estimated at approximately 4.5 billion USD in 2026 and is projected to expand at a CAGR of 9.6% through 2034. This steady growth reflects how scanning has shifted from a back-office utility to a strategic infrastructure layer.

In Japan, the market represents roughly 300 million USD within that global total, positioning the country as a high-value, regulation-driven segment. The revision of the Electronic Bookkeeping Act and stricter compliance requirements have accelerated the digitization of receipts, contracts, and accounting documents. As a result, demand is no longer limited to large enterprises; small and mid-sized businesses are actively investing in scanning workflows that integrate directly with cloud accounting and ERP systems.

| Region | 2026 Market Size | Growth Driver |
|---|---|---|
| Global | Approx. $4.5B | Enterprise DX & compliance |
| Japan | Approx. $0.3B | Regulatory reform & ECM adoption |
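As a quick sanity check on these figures, the compound growth arithmetic can be worked through directly. The sketch below assumes 2026 is the base year and that the 9.6% CAGR compounds annually over the eight years to 2034; the function name `project_market_size` is illustrative, and the result is an extrapolation of the cited estimate rather than a figure taken from the report.

```python
def project_market_size(base_value, cagr, years):
    """Compound a base value forward: base * (1 + cagr) ** years."""
    return base_value * (1 + cagr) ** years


GLOBAL_2026_BILLION_USD = 4.5   # cited global estimate
JAPAN_2026_BILLION_USD = 0.3    # cited Japan estimate
CAGR = 0.096                    # cited global growth rate

# Year-by-year global projection, assuming 2026 is the base year.
projection = {
    year: project_market_size(GLOBAL_2026_BILLION_USD, CAGR, year - 2026)
    for year in range(2026, 2035)
}
japan_share = JAPAN_2026_BILLION_USD / GLOBAL_2026_BILLION_USD

for year, size in projection.items():
    print(f"{year}: {size:.2f}B USD")
print(f"Japan share of 2026 global market: {japan_share:.1%}")
```

Under these assumptions the global market roughly doubles, reaching about 9.4 billion USD by 2034, while Japan accounts for about 6.7% of the 2026 total.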

Enterprise Content Management adoption is also accelerating. IMARC Group notes that Japan’s ECM market is expanding in parallel, as companies seek integrated platforms that combine capture, indexing, storage, and AI-driven retrieval. In this context, document scanning is no longer an isolated service; it is the entry point into searchable, analyzable corporate knowledge.

Healthcare and legal sectors are emerging as the most aggressive adopters. Hospitals are digitizing patient records to improve interoperability, while legal departments require high-fidelity digital archives for contract lifecycle management. In both cases, accuracy and auditability are mission-critical, which increases demand for AI-enhanced preprocessing and validation.

Another notable trend is the shift from bulk outsourcing to hybrid models. Traditionally, enterprises relied on external scanning bureaus for large backlogs. In 2026, however, many organizations deploy in-house mobile capture solutions for daily operations while outsourcing only historical archives. This hybrid approach reduces turnaround time and strengthens data governance.

Google Cloud’s Document AI release notes highlight continuous enhancements in automated document classification and data extraction, reinforcing how cloud providers are embedding scanning into broader AI ecosystems. The market is therefore expanding not only in volume but in functional depth, with value increasingly tied to automation and analytics rather than simple image conversion.

The competitive landscape is shifting from hardware-centric vendors to AI-first platforms. Enterprises evaluate solutions based on OCR accuracy, API integration, and compliance readiness rather than scanner throughput alone. As OCR benchmarks in 2025–2026 approach perfect accuracy for clean documents, the differentiation lies in handling imperfect, real-world inputs at scale.

For Japanese corporations facing labor shortages and mounting compliance complexity, document scanning is becoming a productivity multiplier. What was once considered routine clerical work is now positioned as a foundational element of enterprise intelligence. This structural repositioning explains why the industry continues to expand even in mature markets, signaling sustained investment momentum well beyond 2026.

What Comes Next: Real-Time Live Shadow Removal, Generative Re-Rendering, and Spatial Computing Integration

The next frontier is no longer just removing shadows after capture, but eliminating them in real time while you frame the document.

As mobile NPUs continue to scale, live preview pipelines are beginning to integrate diffusion-based correction directly into the camera feed. According to recent OCR technology analyses in 2025, on-device acceleration is reducing multi-step enhancement latency from seconds to near-interactive ranges.

This paves the way for true live shadow removal, where users see a corrected, evenly lit document before they even press the shutter.

Technically, this requires a hybrid stack: ISP-level exposure fusion combined with lightweight shadow masks generated by transformer backbones, followed by accelerated denoising blocks distilled from larger diffusion models. Instead of full generative passes, edge-optimized variants focus only on detected shadow regions.
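The idea of correcting only detected shadow regions can be sketched with classical image operations. The example below deliberately swaps in simple stand-ins: a morphological closing estimates the paper brightness in place of a transformer-generated mask, and a per-pixel gain replaces the distilled denoising blocks. The function names, `radius`, and `shadow_ratio` are assumptions made for illustration, and images are grayscale 2D lists with values in [0, 1].

```python
def window_filter(image, radius, reduce_fn):
    """Apply reduce_fn (max or min) over a square window around each pixel."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            out[y][x] = reduce_fn(
                image[yy][xx]
                for yy in range(max(0, y - radius), min(height, y + radius + 1))
                for xx in range(max(0, x - radius), min(width, x + radius + 1))
            )
    return out


def remove_shadow_in_mask(image, radius=2, shadow_ratio=0.8):
    """Brighten only the pixels flagged as shadow; leave the rest untouched."""
    # Closing (local max, then local min) erases dark ink strokes and leaves
    # an estimate of the paper brightness under the local illumination.
    background = window_filter(window_filter(image, radius, max), radius, min)
    paper_level = max(max(row) for row in background)
    # Shadow mask: paper noticeably darker than the brightest paper region.
    mask = [[b < shadow_ratio * paper_level for b in row] for row in background]
    corrected = [
        [min(1.0, p * paper_level / b) if m and b > 0 else p
         for p, b, m in zip(img_row, bg_row, mask_row)]
        for img_row, bg_row, mask_row in zip(image, background, mask)
    ]
    return corrected, mask


# Hypothetical page: lit left half, shadowed right half, one ink pixel each.
page = [[0.9] * 4 + [0.45] * 4 for _ in range(4)]
page[1][1] = 0.2   # ink on lit paper
page[1][6] = 0.1   # ink inside the shadow
corrected, mask = remove_shadow_in_mask(page, radius=1)
print(corrected[1])
```

Restricting the gain to masked pixels is what keeps the cost low: lit regions pass through unchanged, so the heavier correction step only runs where the mask says it is needed.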

The result is not merely aesthetic improvement but capture certainty. Users can confirm readability instantly, reducing rescans and workflow friction in high-volume environments such as legal intake or hospital admissions.

| Capability | 2026 Status | Next Phase |
|---|---|---|
| Shadow Removal | Post-capture AI processing | Live preview correction |
| Text Recovery | Context-aware reconstruction | Full semantic re-rendering |
| Output Format | Enhanced image PDF | Structurally regenerated digital twin |

The more radical shift, however, lies in generative re-rendering. Rather than restoring pixels, systems increasingly reconstruct documents from meaning. As discussed in 2026 OCR benchmark commentary, once recognition confidence exceeds high thresholds, AI can regenerate clean vector text, standardized typography, and uniform backgrounds.
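One way to picture confidence-gated re-rendering is as a per-line decision between regenerating text and keeping the original pixels. The sketch below is purely illustrative: the `RecognizedLine` type, the `plan_rerender` function, and the 0.98 threshold are hypothetical and do not describe any specific app or OCR engine.

```python
from dataclasses import dataclass


@dataclass
class RecognizedLine:
    text: str
    confidence: float   # recognition confidence in [0, 1]
    bbox: tuple         # (x, y, width, height) in page pixels


def plan_rerender(lines, threshold=0.98):
    """Decide per line whether to regenerate text or keep the scanned pixels.

    Lines at or above the (hypothetical) threshold are marked for clean
    vector text; the rest fall back to the restored image crop so that
    uncertain content is never silently rewritten.
    """
    plan = []
    for line in lines:
        mode = "vector_text" if line.confidence >= threshold else "keep_pixels"
        plan.append((mode, line))
    return plan


# Hypothetical receipt lines with made-up confidence scores.
receipt = [
    RecognizedLine("TOTAL 1,280", 0.995, (40, 300, 220, 28)),
    RecognizedLine("T4X 116", 0.71, (40, 330, 180, 28)),
]
for mode, line in plan_rerender(receipt):
    print(mode, line.text)
```

Keeping a pixel fallback for low-confidence lines matters for the compliance use case: a regenerated document is only trustworthy if uncertain regions remain traceable to what the camera actually captured.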

In this model, the camera becomes a semantic reader, not just an optical sensor. A shadowed, wrinkled receipt can be transformed into a structurally perfect digital document with corrected alignment and machine-readable fields.

This approach also strengthens compliance. Cleanly regenerated files reduce ambiguity in automated auditing pipelines, particularly in regions with strict electronic record regulations.

Spatial computing introduces yet another dimension. With devices like Vision Pro-class headsets, scanned documents are no longer confined to flat PDFs. They can exist as spatial objects anchored in 3D environments.

Here, shadow logic reverses. After removing real-world shadows for clarity, rendering engines may reintroduce synthetic shadows consistent with virtual light sources. Research in high-resolution mobile mapping and global shutter imaging highlights how depth and geometry data enable physically coherent scene integration.

The document evolves into a spatially aware digital twin, responsive to virtual lighting and perspective.

In practical terms, imagine reviewing a scanned contract in augmented space. The system captures it, removes physical lighting artifacts, reconstructs its structure, and then places it on a virtual desk where shadows behave naturally as you move around it.

This convergence of real-time correction, generative reconstruction, and spatial anchoring signals a shift from “better scans” to intelligent document environments.

The next phase of shadow removal is therefore not subtraction, but transformation.

References

- AAAI Publications: Global-guided Diffusion Model for Shadow Removal

- arXiv: DocShaDiffusion: Diffusion Model in Latent Space for Document Image Shadow Removal

- CVF Open Access (CVPR 2025 Workshops): NTIRE 2025 Image Shadow Removal Challenge Report

- Google Workspace Updates Blog: Revamping the scanned documents editing experience in Google Drive on Android devices

- ZDNET: Want a Microsoft Lens alternative? 5 scanning apps you can try today

- Sparkco: 2025 OCR Accuracy Benchmark Results: A Deep Dive Analysis

- Data Insights Reports: Opportunities in Global And Japan Document Scanning Services Market 2026-2034