Have you ever tried to capture a distant subject with your smartphone, only to end up with shaky, blurry results? In 2026, that frustration is rapidly disappearing. Telephoto performance and image stabilization have reached a level where even 10x or 30x zoom shots can look impressively sharp and usable.

Thanks to advances in metalens optics, periscope-based ALoP designs, next-generation sensor-shift OIS, and deep learning–powered video stabilization, smartphones are overcoming long-standing physical limits. AI-driven super resolution and diffusion upscaling now reconstruct lost detail in high-zoom shots, while high-precision IMU sensors feed real-time motion data to stabilization engines running on dedicated NPUs.

At the same time, the ecosystem around smartphones is evolving. DockKit-compatible AI gimbals, robotic camera concepts, and pro workflows like Log video and external SSD recording are transforming phones into fully integrated production systems. In this article, you will discover how hardware, AI, and accessories are converging in 2026 to deliver gimbal-like stability and DSLR-class reach from a device that fits in your pocket.

- Why Telephoto and Stabilization Have Become the New Smartphone Battleground in 2026

- Breaking Optical Limits: Metalens Commercialization and Ultra-Thin Camera Modules

- ALoP and Advanced Periscope Systems: Achieving 10x+ Optical Zoom in Slim Bodies

- Liquid Lenses and Variable Aperture: Seamless Zoom from Macro to Super Telephoto

- Sensor-Shift OIS 3.0 and High-Precision VCM Actuators: Engineering Out Hand Shake

- Next-Gen IMU Sensors: 6-Axis Motion Data at kHz Speeds for Real-Time Correction

- Deep Learning Video Stabilization: From Optical Flow to Semantic Scene Understanding

- AI Zoom and Diffusion Upscaling: Reconstructing Detail Beyond Optical Reach

- Low-Light Telephoto Without Blur: HDR Fusion, Noise Reduction, and Motion Freezing

- 2026 Flagship Showdown: iPhone 17 Pro Max, Galaxy S25 Ultra, Pixel 10 Pro XL, and More

- Robotic and AI-Driven Camera Concepts: The Rise of the Robot Phone

- DockKit and AI Gimbals: Turning Smartphones into Intelligent Tracking Systems

- Professional Workflows: Log Video, ProRes, Multicam, and External SSD Recording

- Human Factors and Ergonomics: Physics-Based Techniques to Minimize Shake

- Market Data and Industry Growth: Metalens Expansion and Consumer Demand Trends

- References

Why Telephoto and Stabilization Have Become the New Smartphone Battleground in 2026

In 2026, the true competition in smartphones is no longer about megapixels alone. It is about how far you can see—and how steady that vision remains. Telephoto capability and stabilization have become the defining battleground because users now demand DSLR-level reach without carrying extra gear.

According to MMD Research Institute, camera performance continues to rank among the top purchase drivers for Japanese consumers, especially for video and zoom use cases. Live events, sports, and “oshi” culture have amplified the need to capture distant subjects clearly. As a result, manufacturers are shifting from spec races to delivering a “fail-proof shooting experience” where blur and shake are no longer acceptable outcomes.

The technical reason is simple. The narrower the field of view, the more even microscopic hand movement is magnified. A tiny vibration that is invisible at 1x becomes disruptive at 10x. This physical reality has forced brands to innovate across optics, actuators, and AI simultaneously.
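To put rough numbers on this amplification, here is a minimal sketch based on a simple pinhole model. The 24 mm equivalent base focal length, the 36 mm frame width, the 4000-pixel frame width, and the 0.05° shake are illustrative assumptions, not measurements from any particular phone.

```python
import math

def shake_in_pixels(zoom, shake_deg, base_focal_mm=24.0,
                    frame_width_mm=36.0, frame_width_px=4000):
    """Approximate image shift in pixels caused by a small angular shake."""
    focal_mm = base_focal_mm * zoom                      # equivalent focal length at this zoom
    shift_mm = focal_mm * math.tan(math.radians(shake_deg))
    return shift_mm / frame_width_mm * frame_width_px    # convert mm on the frame to pixels

for zoom in (1, 5, 10):
    print(f"{zoom:>2}x zoom: {shake_in_pixels(zoom, 0.05):.1f} px of image shift")
```

Under these assumptions the same tremor shifts the image by roughly 2 pixels at 1x and roughly 23 pixels at 10x, so blur that stays below the visible threshold at wide angle becomes obvious at telephoto.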

| Challenge | Why It Matters in 2026 | Competitive Response |
|---|---|---|
| High optical zoom (5x–10x+) | Users expect clear distant capture | Advanced periscope and ALoP systems |
| Handshake amplification | Telephoto magnifies micro-shake | Sensor-shift OIS + AI fusion |
| Low-light telephoto | Longer exposure increases blur risk | HDR stacking + AI stabilization |

Industry coverage from PCMag and TechRadar highlights that flagship devices now emphasize 5x optical zoom and beyond as standard, not luxury. Meanwhile, AI-driven zoom such as Google’s Super Resolution Zoom demonstrates that software reconstruction is now part of the optical equation. The battlefield has expanded from hardware alone to a tightly integrated computational system.

Academic research reinforces this shift. A comprehensive survey published by MDPI shows that video stabilization has transitioned from classical mechanical correction to deep learning–based prediction models. Modern devices buffer multiple frames, analyze motion vectors, and fuse gyroscope data in real time. The result is footage that appears tripod-stable even while walking.

Telephoto and stabilization now define brand identity. Apple emphasizes ecosystem-level consistency with sensor-shift systems across lenses. Samsung pushes extreme zoom ranges combined with AI reconstruction. Chinese manufacturers integrate liquid lenses and advanced periscope optics to reduce module thickness while extending reach.

Even the component market reflects this arms race. Market analyses project strong growth in metalens adoption, enabling thinner modules and lighter optical assemblies. A lighter module reduces actuator load, which directly improves stabilization precision. In other words, optical miniaturization is not only about design elegance—it enhances steadiness at long focal lengths.

In 2026, consumers no longer ask whether a smartphone can zoom. They ask whether it can zoom without compromise. The brands that win are those that treat telephoto reach and stabilization not as separate features, but as a unified system engineered to eliminate failure. That is why this domain has become the most intense and strategically important battleground in the smartphone industry.

Breaking Optical Limits: Metalens Commercialization and Ultra-Thin Camera Modules

In 2026, metalenses have finally moved beyond laboratory prototypes and into mass-produced smartphones. Instead of relying on curved glass or plastic elements, metalenses use subwavelength nanostructures to manipulate light on a flat surface. According to multiple market analyses, the mobile metalens segment reached approximately 93 million dollars in 2026, growing at a steady CAGR of 5.9 percent, marking a clear transition from early adoption to commercialization.

This shift is not incremental. It fundamentally redefines how thin a high-performance telephoto module can be. Traditional refractive lens stacks require multiple elements to correct aberrations, inevitably increasing thickness. Metalenses, by contrast, can integrate broadband chromatic aberration correction into a single metasurface, dramatically reducing module depth.

| Metric | Conventional Lens Stack | Metalens (2026) |
|---|---|---|
| Module Thickness | Several millimeters | Up to 90% reduction possible |
| Color Correction | Multiple lens elements | Single metasurface control |
| Manufacturing | Molding / polishing | 12-inch DUV lithography |

The use of semiconductor-style 12-inch DUV lithography, as reported in industry briefings around CES 2026, enables wafer-level scalability. Companies such as Metalenz and NIL Technology are leveraging existing chip fabrication infrastructure, which significantly improves uniformity and yield compared to precision glass molding.

For smartphone designers, the most visible benefit is the reduction of camera bump. Telephoto modules have historically required long optical paths, especially in periscope configurations. By replacing part of the refractive stack with a flat metasurface, engineers reduce both height and weight. A lighter optical assembly directly lowers the load on OIS actuators, improving stabilization efficiency without increasing power consumption.

Another critical commercialization vector is autofocus and under-display camera integration. Market reports highlight that 2026 deployments are not limited to main cameras; metalenses are increasingly used in compact depth sensors and autofocus modules where space constraints are extreme. Their planar geometry simplifies alignment and enables tighter packaging in foldable devices.

Looking ahead, forecasts suggest dramatic acceleration. Some projections estimate the broader metalens solutions market could reach multi-billion-dollar levels by 2032, with triple-digit CAGR in certain segments. This growth is fueled by cross-industry demand spanning AR/VR, automotive sensing, and mobile imaging.

Perhaps the most compelling signal comes from research institutions. Carnegie Mellon University has demonstrated meta-lens systems capable of maintaining sharp focus across extended depth ranges, hinting at future smartphone cameras with fewer moving parts. While still in research phases, such developments reinforce that commercialization in 2026 is not an endpoint but a platform.

In practical terms, ultra-thin telephoto modules enabled by metalenses allow manufacturers to pursue slimmer chassis designs without sacrificing optical reach. Users benefit from devices that sit flat on a desk, yet deliver high-magnification clarity previously reserved for thicker camera arrays. Breaking optical limits is no longer about adding more glass; it is about engineering light at the nanoscale.

ALoP and Advanced Periscope Systems: Achieving 10x+ Optical Zoom in Slim Bodies

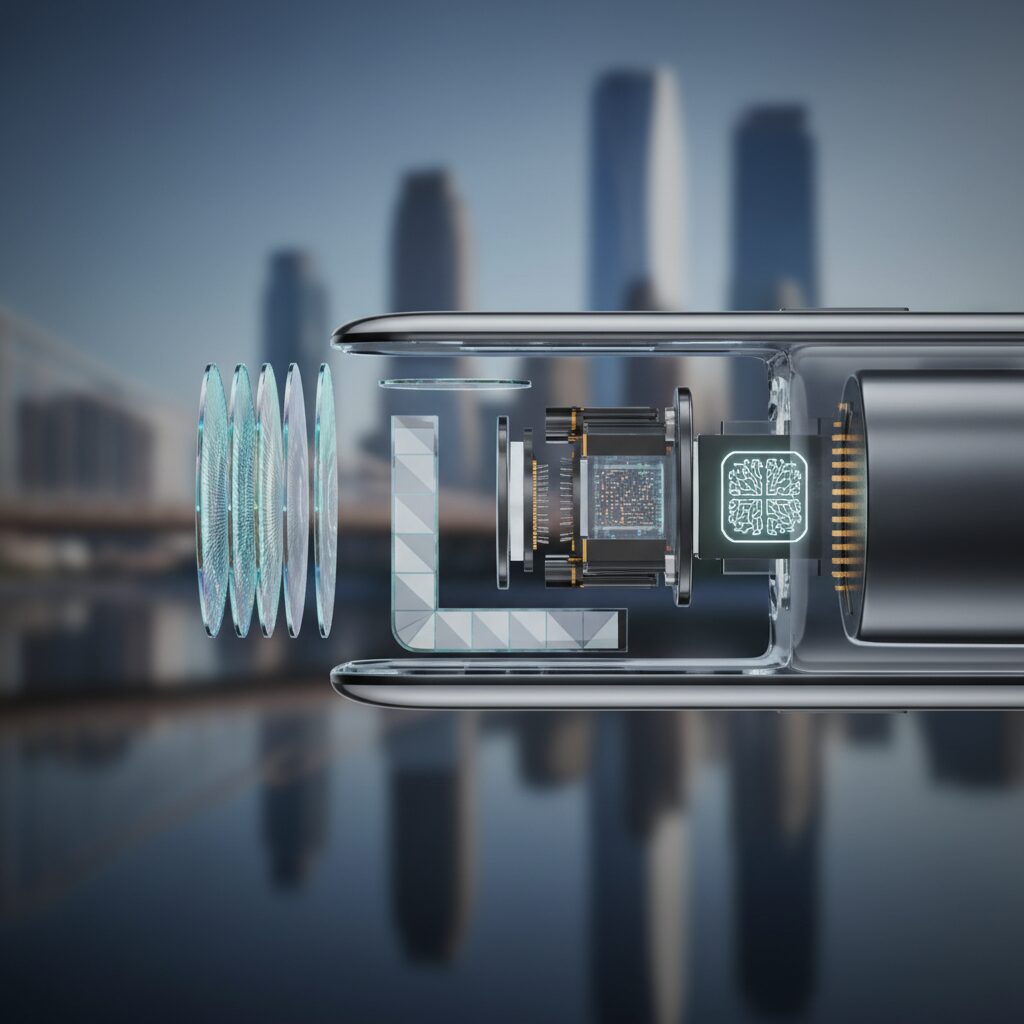

Achieving true 10x or higher optical zoom in a slim smartphone body has long been constrained by physics. A longer focal length requires a longer optical path, which traditionally meant thicker camera bumps. In 2026, ALoP (All Lenses on Prism) and advanced periscope systems have fundamentally redefined this equation.

By folding light horizontally inside the chassis and reorganizing the lens stack directly on the prism, manufacturers now secure DSLR-class reach without compromising industrial design.

Structural Differences: Conventional Periscope vs. ALoP

| Architecture | Lens Arrangement | Impact on Thickness | Zoom Capability |
|---|---|---|---|
| Traditional Periscope | Lenses stacked after prism | Thicker module | 5x–10x typical |
| ALoP | All lenses aligned on prism plane | Significantly reduced height | 10x+ optimized |

In conventional folded optics, light enters the prism and travels sideways, but multiple lens elements are still stacked vertically, increasing module height. ALoP rearranges every lens element along the prism axis itself. This geometric reconfiguration minimizes vertical stacking, which is critical for ultra-slim flagships and foldable devices.

According to industry analyses referenced by TechTimes in early 2026, this layout becomes particularly advantageous beyond 10x optical zoom, where spatial efficiency and stabilization precision must coexist. The slimmer structure also reduces mechanical inertia, directly benefiting stabilization performance.

Advanced implementations such as triple-prism optical systems in devices like Vivo X300 Pro and Oppo Find X8 Pro demonstrate how 10x lossless zoom can be achieved while maintaining competitive body thickness. The key innovation is not merely extending reach, but integrating optical length into horizontal device architecture.

Another overlooked advantage lies in stabilization dynamics. Because the prism can be driven on dual axes at high speed, manufacturers can correct micro-vibrations more rapidly than moving an entire lens stack. This becomes crucial at 10x or higher, where minimal hand movement is dramatically amplified in-frame.

Engineering papers on OIS actuator development show that actuator responsiveness improves when moving smaller, lighter optical groups. ALoP’s reduced vertical mass aligns directly with this principle. In practical terms, users experience steadier framing during handheld telephoto shots, even before AI stabilization layers are applied.

Foldable smartphones benefit disproportionately. Their expanded internal volume allows longer horizontal optical paths, making 10x+ true optical zoom feasible without a protruding module. This architectural synergy explains why several 2026 flagship foldables prioritize advanced periscope integration.

As optical zoom pushes further into double-digit magnification, the industry’s focus shifts from raw focal length to spatial engineering. ALoP and next-generation periscope systems prove that slim bodies and extreme reach are no longer mutually exclusive. Instead, they are the result of precise optical folding, prism-centric lens alignment, and actuator-aware mechanical design working as one cohesive system.

Liquid Lenses and Variable Aperture: Seamless Zoom from Macro to Super Telephoto

Liquid lens technology and variable aperture systems are redefining what “zoom” means on a smartphone in 2026. Instead of switching between physically separate lens modules, a single optical unit now adapts in real time, delivering everything from extreme close-up macro at 3 cm to super telephoto reach up to 30x.

According to industry coverage of Xiaomi’s early liquid lens implementation and subsequent refinements, the core mechanism relies on electrically controlling the curvature of a fluid interface. By precisely adjusting surface tension, the lens changes focal length without moving bulky glass elements.

This eliminates mechanical lens switching, reducing focus hunting, shock-induced blur, and latency during zoom transitions.
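The link between curvature and focal length described above can be illustrated with the thin-lens formula for a single curved surface in air, f = R / (n - 1). This is a simplified sketch: the refractive index of 1.40 and the radii are hypothetical values, and a real liquid lens is one element inside a larger optical stack rather than a standalone lens.

```python
def focal_length_mm(radius_mm, refractive_index=1.40):
    """Thin plano-convex lens in air: f = R / (n - 1)."""
    if refractive_index <= 1.0:
        raise ValueError("refractive index must exceed 1.0 for a converging lens in air")
    return radius_mm / (refractive_index - 1.0)

# Flattening the fluid surface (larger radius) lengthens the focal length.
for radius in (4.0, 8.0, 16.0):
    print(f"R = {radius:>4.1f} mm -> f = {focal_length_mm(radius):.1f} mm")
```

The point of the sketch is only that focal length changes continuously with the radius of curvature, which is what allows zoom and focus to vary without swapping lens modules.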

| Feature | Conventional Tele Module | Liquid Lens System (2026) |
|---|---|---|
| Focal Adjustment | Discrete lens switching | Continuous curvature control |
| Zoom Transition | Step-based | Seamless |
| Macro Capability | Dedicated module required | Integrated (≈3 cm) |

The practical impact is especially visible in hybrid shooting scenarios. When you move from photographing a flower at near-macro distance to capturing a distant architectural detail, the system recalibrates focal length smoothly, without the micro-jumps typical of periscope-only designs.

This continuity also benefits stabilization. Because there is no abrupt module shift, optical image stabilization actuators maintain consistent compensation parameters, avoiding recalibration delays that traditionally caused momentary blur.

The result feels closer to a parfocal cinema lens than to a typical smartphone camera stack.

Equally transformative is the integration of a variable aperture mechanism, dynamically adjustable from approximately f/1.4 to f/4.0 in leading 2026 flagships. As reported in multiple 2026 smartphone camera analyses, this range allows devices to adapt exposure strategy based on scene luminance and motion.

In bright daylight at long focal lengths, stopping down improves edge sharpness and depth consistency. In low light or when tracking fast-moving subjects, opening to f/1.4 preserves shutter speed, directly reducing motion blur.

This optical flexibility reduces overreliance on aggressive ISO boosts, preserving micro-contrast and color integrity.
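The exposure benefit of that aperture range follows from standard f-number arithmetic: light gathered scales with the inverse square of the f-number. The sketch below works through the f/1.4 to f/4.0 range quoted above; the 1/30 s starting shutter speed is an illustrative assumption.

```python
import math

def light_ratio(n_wide, n_narrow):
    """How many times more light the wider aperture admits."""
    return (n_narrow / n_wide) ** 2

def stops_between(n_wide, n_narrow):
    """Exposure difference in stops between two f-numbers."""
    return 2 * math.log2(n_narrow / n_wide)

ratio = light_ratio(1.4, 4.0)
stops = stops_between(1.4, 4.0)
shutter_at_f4 = 1 / 30                      # assumed shutter speed at f/4.0
shutter_at_f14 = shutter_at_f4 / ratio      # same exposure at f/1.4, same ISO

print(f"f/1.4 admits about {ratio:.1f}x the light of f/4.0 ({stops:.1f} stops)")
print(f"1/30 s at f/4.0 becomes about 1/{1 / shutter_at_f14:.0f} s at f/1.4")
```

Roughly three stops of extra light is what lets the camera shorten the exposure instead of raising ISO when a subject is moving.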

From an engineering perspective, the coordination between liquid curvature control and aperture blades is computationally optimized. Real-time scene analysis predicts subject distance, luminance distribution, and motion vectors before mechanically adjusting both focal geometry and iris diameter.

Research cited in broader mobile imaging surveys indicates that this predictive control loop operates within milliseconds, ensuring that zooming while recording 4K or even 8K video does not produce exposure flicker or focus breathing artifacts.

Zoom is no longer a disruptive action; it becomes a fluid compositional gesture.

For gadget enthusiasts, the most exciting implication lies in creative control. A single compact module now replaces what previously required separate macro, mid-tele, and periscope units. That consolidation frees internal space, reduces weight, and indirectly enhances stabilization efficiency.

In real-world use—concert photography, wildlife observation, or product macro shooting—the system responds instantly, maintaining sharpness and brightness across an enormous focal spectrum.

The boundary between optical and computational zoom continues to blur, but liquid lenses and variable aperture systems ensure that the foundation remains fundamentally optical, delivering authentic detail before AI enhancement even begins.

Sensor-Shift OIS 3.0 and High-Precision VCM Actuators: Engineering Out Hand Shake

When you push beyond 5x or 10x optical zoom, even the slightest tremor is brutally amplified. In 2026, manufacturers address this not with incremental tweaks, but with a fundamental redesign of stabilization architecture. Sensor-Shift OIS 3.0 and next-generation VCM actuators work as a tightly synchronized mechanical system that neutralizes hand shake at its physical source.

Unlike traditional lens-shift OIS, Sensor-Shift OIS 3.0 moves the image sensor itself across multiple axes. In devices such as the iPhone 17 Pro Max, the sensor is driven along X, Y, Z, and rotational axes, performing corrections thousands of times per second. This high-frequency compensation is particularly effective in telephoto shooting, where angular shake translates into large frame displacement.

The engineering advantage becomes clearer when comparing approaches.

| System | Moving Element | Primary Strength |
|---|---|---|
| Lens-Shift OIS | Lens group | Compact correction for moderate shake |
| Sensor-Shift OIS 3.0 | Image sensor (multi-axis) | High-precision correction at long focal lengths |

By shifting the sensor rather than heavy lens stacks, inertia is reduced and micro-adjustments become faster and more precise. This matters at the exact moment you tap the shutter. Research on OIS actuator development published via Semantic Scholar shows that optimizing magnetic field distribution inside the actuator directly improves response speed and positional accuracy. In practice, this means tap-induced micro-vibrations are counteracted before they register as blur.

At the heart of this system is the high-precision Voice Coil Motor actuator. Recent designs apply magnetic field and geometric optimization—sometimes using genetic algorithms—to maximize flux density uniformity within extremely limited module space. The result is stronger restoring force, lower hysteresis, and improved linearity under low-frequency, large-amplitude shake, which is typical of handheld telephoto shooting.

Equally critical is the data feeding these actuators. According to Design World, modern 6-axis MEMS IMUs now support up to 20-bit resolution and output rates as high as 6.4 kHz. This ultra-dense motion data allows the stabilization controller to anticipate motion rather than merely react to it. The actuator and IMU effectively form a closed-loop control system operating in milliseconds.

In real-world scenarios—concerts, sports events, or nighttime cityscapes—this engineering translates into a visible difference. Frame-to-frame jitter is dramatically reduced, rolling distortion is minimized, and telephoto video appears as if mounted on a micro-gimbal. Crucially, this stability is achieved mechanically before computational correction begins, preserving native detail and reducing reliance on aggressive digital cropping.

Sensor-Shift OIS 3.0 paired with high-precision VCM actuators does not simply “reduce shake.” It redefines handheld telephoto capture by transforming unstable human motion into controlled, counterbalanced mechanical movement—delivering DSLR-class steadiness from a device that still fits in your pocket.

Next-Gen IMU Sensors: 6-Axis Motion Data at kHz Speeds for Real-Time Correction

Real-time stabilization in 2026 smartphones is no longer driven by optics alone. At the core of this evolution are next-generation 6-axis IMU sensors capable of delivering motion data at kilohertz-level speeds. These modules continuously measure three-axis angular velocity and three-axis acceleration, enabling the camera system to react to hand tremor and micro-vibrations before they visibly degrade telephoto detail.

According to Design World, the latest MEMS-based IMUs from manufacturers such as TDK support up to 20-bit data resolution and output rates reaching 6.4 kHz. This means the sensor can report motion thousands of times per second, far exceeding traditional video frame rates. The higher the sampling frequency, the earlier the stabilization pipeline can predict and counteract motion.

| Specification | Conventional IMU | Next-Gen IMU (2026) |
|---|---|---|
| Axes | 6-axis | 6-axis (enhanced calibration) |
| Data Resolution | 16-bit typical | Up to 20-bit |
| Output Rate | 1 kHz class | Up to 6.4 kHz |

At extreme zoom levels, even sub-degree rotations translate into dramatic on-screen shake. A 10x optical zoom effectively magnifies angular error by the same factor. With kilohertz sampling, the IMU feeds ultra-dense motion vectors into the OIS actuator and AI stabilization engine, allowing micro-corrections to occur within milliseconds. This is especially critical during shutter press, where so-called tap-induced vibration can blur high-resolution 48MP or 200MP telephoto captures.
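A quick back-of-the-envelope sketch shows what these figures mean per video frame. The output rates and bit depths come from the comparison above; the ±2000 degrees-per-second full-scale range is a hypothetical value chosen only to illustrate how resolution scales with bit depth.

```python
def samples_per_frame(output_rate_hz, fps):
    """Number of IMU samples available during one video frame."""
    return output_rate_hz / fps

def lsb_dps(full_scale_dps, bits):
    """Smallest angular-rate step for a signed range of +/- full_scale_dps."""
    return (2 * full_scale_dps) / (2 ** bits)

for rate in (1000, 6400):
    print(f"{rate} Hz IMU at 30 fps: {samples_per_frame(rate, 30):.0f} samples per frame")

for bits in (16, 20):
    # Assumed full-scale range of +/- 2000 deg/s (illustrative only).
    print(f"{bits}-bit output: {lsb_dps(2000, bits):.4f} deg/s per step")
```

At 6.4 kHz the stabilizer sees a couple of hundred motion samples within a single 30 fps frame, and each extra four bits of resolution makes the smallest detectable rate step sixteen times finer.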

Academic surveys on video stabilization, including work published by MDPI, emphasize that modern pipelines increasingly fuse gyroscope signals with optical flow. High-rate IMU data improves the accuracy of this fusion. Instead of reacting to blur after it appears, the system predicts motion trajectories in advance.

Thermal stability is another breakthrough. Advanced MEMS designs maintain calibration accuracy despite temperature shifts caused by prolonged 8K recording or outdoor winter shooting. This consistency ensures that the actuator receives reliable motion vectors, preventing drift or overcompensation during long takes.

Beyond stabilization, these IMUs integrate protective features such as free-fall detection. When sudden acceleration patterns are detected, the camera module can mechanically park or lock the OIS actuator, reducing damage risk. This dual role—precision sensing and hardware protection—makes the IMU a foundational component of the entire imaging stack.

For users, the result is tangible. Walking while zooming at 15x, recording handheld concerts, or capturing distant wildlife no longer produces jittery footage. What makes this possible is not only stronger motors or smarter algorithms, but the silent, kilohertz-speed stream of motion data constantly refining every frame.

Deep Learning Video Stabilization: From Optical Flow to Semantic Scene Understanding

Deep learning has fundamentally redefined how video stabilization works on smartphones. According to the comprehensive survey published by MDPI, the field has shifted from classical motion models and handcrafted filters to fully data-driven neural architectures that learn stabilization directly from large-scale video datasets. This transition is particularly critical in telephoto recording, where even micro-vibrations are amplified dramatically.

Traditional pipelines relied heavily on optical flow to estimate pixel-level motion between consecutive frames. While effective for global camera shake, optical flow alone struggles in scenes with moving subjects, parallax, or depth variation. Deep learning models now treat stabilization as a spatiotemporal prediction problem rather than a purely geometric correction task.

The key breakthrough is that modern systems no longer stabilize pixels—they stabilize scenes.

From Optical Flow to Learned Motion Estimation

| Approach | Core Mechanism | Limitation in Telephoto Use |
|---|---|---|
| Classical Optical Flow | Frame-to-frame vector estimation | Sensitive to occlusion and depth shifts |
| Kalman / Motion Filtering | Trajectory smoothing | Over-smoothing of intentional motion |
| Deep Neural Stabilization | End-to-end spatiotemporal learning | Requires high NPU performance |

In early smartphone implementations, optical flow vectors were fed into smoothing filters to remove high-frequency jitter. However, in telephoto scenarios—such as 10x or higher zoom—foreground motion and background motion diverge significantly. Classical algorithms often misinterpret subject movement as camera shake, producing the infamous “jello” or wobble artifacts.
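The classical pipeline described here can be sketched in a few lines. This is a one-dimensional toy version, assuming the per-frame camera motion has already been estimated (for example from optical flow); the moving-average window is a stand-in for whatever smoothing filter a real implementation uses.

```python
def cumulative(values):
    """Integrate per-frame motion into a camera path."""
    total, path = 0.0, []
    for v in values:
        total += v
        path.append(total)
    return path

def moving_average(path, radius):
    """Smooth the path with a centered window, truncated at the ends."""
    smoothed = []
    for i in range(len(path)):
        lo, hi = max(0, i - radius), min(len(path), i + radius + 1)
        window = path[lo:hi]
        smoothed.append(sum(window) / len(window))
    return smoothed

def stabilizing_offsets(frame_motion, radius=2):
    """Per-frame shift that moves each frame from the raw path onto the smoothed path."""
    raw = cumulative(frame_motion)
    smooth = moving_average(raw, radius)
    return [s - r for s, r in zip(smooth, raw)]

jitter = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]   # alternating shake, in pixels per frame
print([round(o, 2) for o in stabilizing_offsets(jitter)])
```

Because the filter has no notion of what is moving in the scene, a subject crossing the frame shifts the estimated path just as camera shake does, which is the failure mode the learned approaches below are meant to address.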

Deep learning models address this by learning motion priors from thousands of stabilized–unstabilized video pairs. Instead of assuming uniform motion across the frame, convolutional and transformer-based networks estimate both local and global motion fields simultaneously.

As highlighted in recent real-time mobile AI challenges hosted on platforms such as CodaLab, competitive models process multi-frame buffers, often 10 frames or more, to predict a stable camera trajectory for upcoming frames under strict latency constraints.

Semantic Scene Understanding

The most important evolution in 2026 systems is semantic stabilization. Neural networks now segment each frame into categories such as sky, buildings, faces, vehicles, or dynamic foreground elements before motion correction is applied.

This enables differentiated treatment of motion. Static background structures are rigidly stabilized, while moving subjects retain natural motion blur and trajectory continuity. The result is stabilization that feels cinematic rather than mechanically frozen.

For example, when recording a telephoto video of a runner in front of distant architecture, the system locks the horizon and building edges while allowing the athlete’s stride to remain fluid. Classical optical flow systems frequently failed in this scenario due to conflicting motion vectors.

By fusing IMU gyroscope data with learned visual motion representations, modern architectures achieve higher positional accuracy than either modality alone. The IMU provides high-frequency inertial signals, while the neural network corrects drift and resolves ambiguities caused by rolling shutter or occlusion.
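The division of labor between the two signals can be illustrated with a classical complementary filter. This is a deliberately simple stand-in, not the learned fusion used in shipping phones: the `alpha` weight, the 0.5°/s gyro bias, and the assumption that the visual estimate is drift-free are all illustrative.

```python
def complementary_filter(gyro_rates_dps, visual_angles_deg, dt_s, alpha=0.98):
    """Blend integrated gyro rate (fast but drifting) with a visual angle estimate (slow but drift-free)."""
    angle = visual_angles_deg[0]
    fused = []
    for rate, visual in zip(gyro_rates_dps, visual_angles_deg):
        predicted = angle + rate * dt_s                      # short term: follow the gyro
        angle = alpha * predicted + (1.0 - alpha) * visual   # long term: pull toward vision
        fused.append(angle)
    return fused

# Stationary camera: the gyro reports a constant 0.5 deg/s bias, vision reports 0 deg.
steps, dt = 2000, 0.01
fused = complementary_filter([0.5] * steps, [0.0] * steps, dt)
gyro_only_drift = 0.5 * dt * steps
print(f"gyro-only drift after {steps * dt:.0f} s: {gyro_only_drift:.1f} deg")
print(f"fused estimate stays near: {fused[-1]:.2f} deg")
```

Integrating the biased gyro alone drifts by 10 degrees over 20 seconds, while the fused estimate stays within a fraction of a degree, which mirrors the role the visual branch plays in correcting inertial drift.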

Another critical advancement is predictive frame synthesis. Instead of aggressively cropping to remove borders caused by motion compensation, neural models generate plausible peripheral content, minimizing field-of-view loss. This is particularly valuable in telephoto capture, where every degree of angle matters.
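The cost of the cropping approach is easy to quantify. The sketch below assumes a 3840×2160 frame and a uniform safety margin on every side; both the frame size and the margin values are illustrative.

```python
def retained_fov_fraction(width_px, height_px, max_shift_px):
    """Fraction of the frame area left after cropping a margin of max_shift_px on every side."""
    if 2 * max_shift_px >= min(width_px, height_px):
        raise ValueError("margin consumes the whole frame")
    kept_w = width_px - 2 * max_shift_px
    kept_h = height_px - 2 * max_shift_px
    return (kept_w * kept_h) / (width_px * height_px)

for margin in (50, 100, 200):
    kept = retained_fov_fraction(3840, 2160, margin)
    print(f"{margin:>3} px margin keeps {kept:.1%} of the frame area")
```

A 100-pixel margin already discards about 14 percent of the frame area, which is the loss that synthesizing border content tries to avoid.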

In practical smartphone implementations, all of this processing runs on dedicated NPUs in real time. The convergence of high-throughput neural accelerators and optimized deep models enables 4K and even 8K stabilized video without external hardware.

From a technological standpoint, the journey from optical flow vectors to semantic scene understanding represents a paradigm shift. Video stabilization has evolved from a geometric correction layer into an intelligent perception system that understands what should remain still and what should move. That distinction defines the cutting edge of mobile telephoto video in 2026.

AI Zoom and Diffusion Upscaling: Reconstructing Detail Beyond Optical Reach

Digital zoom once meant simple cropping, amplified noise, and painfully obvious blur.

In 2026, that assumption no longer holds true. AI Zoom powered by diffusion-based upscaling reconstructs detail that optics alone cannot physically resolve.

This is not magnification. It is probabilistic reconstruction guided by learned visual priors.

According to industry analyses such as TechTimes and comparative testing reported by PCMag, flagship smartphones now combine optical zoom, sensor cropping, multi-frame fusion, and generative diffusion models into a unified pipeline.

Instead of stretching pixels, the system predicts missing high-frequency detail by referencing patterns learned from millions of training images.

This approach allows 12MP tele captures to be reconstructed into images with detail comparable to 48MP output in supported modes.

| Method | How Detail Is Created | Limitation |
|---|---|---|
| Traditional Digital Zoom | Pixel interpolation | Soft edges, amplified blur |
| Multi-Frame Super Resolution | Frame alignment + merge | Motion sensitive |
| Diffusion Upscaling (2026) | AI-based detail reconstruction | Model-dependent realism |
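The first row of the table is the easiest to demonstrate. The sketch below applies plain linear interpolation to a single row of pixels containing a hard edge; the pixel values and the 4x factor are illustrative.

```python
def upscale_linear(row, factor):
    """Upscale a 1D row of pixel values by linear interpolation between neighbors."""
    n = len(row)
    out = []
    for i in range((n - 1) * factor + 1):
        pos = i / factor                 # position in source-pixel coordinates
        left = int(pos)
        right = min(left + 1, n - 1)
        t = pos - left                   # blend weight toward the right neighbor
        out.append(row[left] * (1 - t) + row[right] * t)
    return out

edge = [0, 0, 255, 255]                  # a hard black-to-white edge
print(upscale_linear(edge, 4))
```

The one-pixel edge turns into a ramp spread over several output pixels. Interpolation only blends values that were already captured, so it cannot add detail, which is the gap that multi-frame and learned methods try to fill.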

Google’s Super Resolution Zoom in devices such as the Pixel 10 Pro XL demonstrates this clearly. Even at 30x zoom, fine text remains legible because the AI fills in edge structure and texture while referencing gyroscope data to counteract micro-blur.

Samsung’s AI-enhanced Space Zoom similarly combines high-resolution sensors with generative refinement to preserve architectural lines and feather details at extreme magnifications.

These systems do not guess randomly. They are constrained by optical input, motion vectors, and semantic scene recognition.

Diffusion models play a particularly important role in texture realism. Unlike earlier GAN-based upscalers, diffusion approaches iteratively denoise a latent representation, gradually converging toward a plausible high-resolution output.

Research in real-time mobile super-resolution challenges, such as those documented by CodaLab competitions, shows that temporal consistency and motion-aware conditioning significantly reduce flicker and artifact generation.

This is critical for telephoto video, where amplified handshake can destroy perceived sharpness.

AI Zoom also compensates for micro-vibrations that escape mechanical stabilization.

When IMU data detects slight rotational jitter, the reconstruction model adjusts edge orientation during upscaling, effectively restoring perceived clarity lost during capture.

This fusion of physics-based sensing and generative modeling is what pushes detail beyond pure optical reach.

However, it is important to understand that diffusion upscaling reconstructs plausible detail, not ground-truth information.

The output remains bounded by captured light data, sensor quality, and scene complexity.

In well-lit telephoto conditions, the results approach optical performance. In low-light extremes, reconstruction still competes with noise and motion blur.

For gadget enthusiasts, the implication is profound. Optical zoom defines reach, but AI Zoom defines usability.

Diffusion Upscaling transforms extreme zoom from emergency utility into a creative tool, enabling handheld capture of distant signage, wildlife, or stage performers with clarity previously reserved for dedicated telephoto lenses.

It represents the moment when software stops assisting optics and begins extending it.

Low-Light Telephoto Without Blur: HDR Fusion, Noise Reduction, and Motion Freezing

Low-light telephoto photography has long been the most unforgiving scenario for smartphones. When you zoom in, the field of view narrows, less light reaches the sensor, and even microscopic hand movements are dramatically amplified. In 2026, however, the fusion of HDR processing, AI-driven noise reduction, and motion freezing technologies has fundamentally changed what is possible.

The key is no longer a single long exposure, but intelligent multi-frame synthesis executed in milliseconds. Modern flagships capture multiple short exposures instead of relying on a slow shutter. These frames are then aligned using gyroscope data and optical flow analysis before being merged into a single sharp image.
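The benefit of merging short exposures can be shown with a small simulation. This sketch assumes the frames are already perfectly aligned and that noise is independent Gaussian noise per pixel; the signal level, noise level, and frame count are illustrative.

```python
import random
import statistics

def simulate_frames(true_value, noise_sigma, n_frames, n_pixels, seed=0):
    """Generate aligned frames of a flat patch with independent Gaussian noise."""
    rng = random.Random(seed)
    return [[true_value + rng.gauss(0.0, noise_sigma) for _ in range(n_pixels)]
            for _ in range(n_frames)]

def merge_frames(frames):
    """Average the frames pixel by pixel."""
    n = len(frames)
    return [sum(pixel_stack) / n for pixel_stack in zip(*frames)]

frames = simulate_frames(true_value=100.0, noise_sigma=8.0, n_frames=16, n_pixels=2000)
merged = merge_frames(frames)

single_sigma = statistics.pstdev(frames[0])
merged_sigma = statistics.pstdev(merged)
print(f"noise in one frame:  {single_sigma:.2f}")
print(f"noise after merging: {merged_sigma:.2f}")
```

Averaging N frames reduces independent noise by roughly the square root of N, so 16 short exposures give about a quarter of the noise of a single one while each exposure stays short enough to limit blur.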

According to coverage by TechTimes on 2026 smartphone imaging trends, leading devices now combine real-time HDR Scene Optimization with AI stabilization pipelines. This allows bright highlights, deep shadows, and distant subjects to be preserved simultaneously—even at 5x to 10x optical zoom.

| Challenge in Low-Light Telephoto | 2026 Computational Solution | Practical Impact |
|---|---|---|
| Slow shutter blur | Multi-frame HDR with short exposures | Sharper handheld night zoom shots |
| High ISO noise | AI-based noise modeling & temporal stacking | Cleaner textures without waxy artifacts |
| Subject motion | Motion-aware frame selection | Frozen faces and readable details |

Noise reduction has also evolved beyond simple smoothing. Deep learning models trained on millions of low-light samples now distinguish between random sensor noise and meaningful fine detail. As reported in MDPI’s survey on modern video stabilization and AI imaging, data-driven approaches outperform classical filtering by preserving edges while suppressing chroma noise.

In telephoto night scenes—such as concerts or city skylines—this matters enormously. Instead of blurring away distant signage or facial features, AI analyzes spatial frequency patterns and reconstructs texture using learned priors. The result is detail retention without the “watercolor” effect common in earlier generations.

Motion freezing represents the third pillar. Rather than depending purely on faster shutter speeds, 2026 devices apply semantic segmentation to detect moving subjects. Background regions may be merged across multiple frames, while the subject is selected from the sharpest individual exposure.
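A minimal sketch of that compositing rule follows, under loud assumptions: the frames are already aligned 1-D scanlines, `subject_mask` is a hypothetical output of a segmentation model (not computed here), and "sharpest" is approximated by gradient energy inside the mask rather than by whatever metric a real device uses.

```python
def gradient_energy(row, mask):
    """Sum of squared neighbor differences inside the masked region (higher means sharper)."""
    return sum((row[i + 1] - row[i]) ** 2
               for i in range(len(row) - 1) if mask[i] and mask[i + 1])

def motion_aware_merge(frames, subject_mask):
    """Average the background across frames; copy the subject from the sharpest frame."""
    sharpest = max(frames, key=lambda f: gradient_energy(f, subject_mask))
    n = len(frames)
    return [sharpest[i] if subject_mask[i] else sum(f[i] for f in frames) / n
            for i in range(len(subject_mask))]

# Hypothetical data: frame_a holds a crisp subject, frame_b a motion-blurred one.
frame_a = [10, 12, 0, 100, 0, 8]
frame_b = [12, 10, 30, 40, 30, 10]
mask    = [False, False, True, True, True, False]
result  = motion_aware_merge([frame_a, frame_b], mask)
```

The background pixels come out as the two-frame average, which lowers noise, while the subject pixels are taken unchanged from `frame_a`, so subject motion never gets blended into a ghost.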

This hybrid approach is particularly effective in night sports or live events. A performer under a spotlight can be frozen sharply, while surrounding shadows are lifted through HDR fusion. Dynamic range improvements, such as the 110 dB capture capability highlighted in recent flagship analyses, help keep highlights from clipping during this process.

Importantly, these processes occur on-device through dedicated NPUs. Real-time processing allows users to preview stabilized, HDR-enhanced telephoto frames before capture. This feedback loop reduces shooting uncertainty and increases keeper rates in challenging light.

Low-light telephoto photography in 2026 is therefore no longer a gamble. Through synchronized HDR fusion, AI noise suppression, and motion-aware compositing, smartphones transform unstable, dim environments into sharp, high-contrast images—without requiring tripods or external lighting.

The convergence of computational intelligence and telephoto optics has effectively neutralized blur as the defining weakness of night zoom photography.

2026 Flagship Showdown: iPhone 17 Pro Max, Galaxy S25 Ultra, Pixel 10 Pro XL, and More

The 2026 flagship battlefield is no longer defined by megapixels alone. It is defined by how far you can zoom, how steady the frame remains, and how intelligently hardware and AI collaborate in real time. iPhone 17 Pro Max, Galaxy S25 Ultra, and Pixel 10 Pro XL represent three distinct philosophies competing for imaging supremacy.

Each device answers the same question differently: how do you deliver DSLR-class telephoto stability in a pocket-sized form?

| Model | Telephoto Hardware | Stabilization & AI Strength |
|---|---|---|
| iPhone 17 Pro Max | 48MP, 4x optical, 8x hybrid | 3rd-gen sensor-shift OIS, Apple Log 2, DockKit integration |
| Galaxy S25 Ultra | 50MP periscope (5x), 200MP main | 100x Space Zoom, Galaxy AI reconstruction |
| Pixel 10 Pro XL | 48MP, 5x optical | 30x AI Zoom, Video Boost 8K, Super Resolution Zoom |

Apple focuses on consistency across the entire camera stack. By standardizing 48MP sensors across lenses, the iPhone 17 Pro Max minimizes tonal and exposure shifts during zoom transitions. Its third-generation sensor-shift OIS moves the image sensor itself along multiple axes at extremely high frequencies, reducing micro-jitters that typically appear in handheld telephoto video. According to industry testing summarized by PCMag and other reviewers, this results in unusually stable handheld 4x footage without aggressive cropping.

Samsung, by contrast, plays the reach game. The Galaxy S25 Ultra pairs a 5x optical periscope lens with AI-driven 100x Space Zoom. Rather than relying purely on optics, it leverages high-resolution capture and neural reconstruction to restore detail lost at extreme magnification. As deep learning–based stabilization research published in MDPI indicates, multi-frame prediction significantly reduces perceived shake in high-zoom scenarios. Samsung applies this principle aggressively, making distant architecture and wildlife shots surprisingly usable.

Google’s Pixel 10 Pro XL prioritizes computational intelligence. Its 30x AI Zoom and Super Resolution Zoom combine optical data with learned detail synthesis. The device buffers multiple frames, analyzes motion vectors, and digitally corrects micro-blur before output. In challenging lighting, Video Boost 8K enhances dynamic range while AI stabilization smooths walking footage without over-cropping. Google’s strength lies in software-driven correction layered on precise gyro and IMU data streams.

The philosophical divide becomes clear at high magnification. Apple optimizes mechanical precision and ecosystem control. Samsung maximizes optical reach combined with bold AI reconstruction. Google leans into neural interpretation and semantic scene analysis.

For gadget enthusiasts who demand telephoto excellence, the decision hinges on use case. Controlled cinematic capture and color consistency favor Apple. Extreme-distance clarity favors Samsung. Intelligent correction in unpredictable environments favors Google.

The 2026 flagship showdown is not about who zooms farther—it is about who stabilizes reality most convincingly.

Robotic and AI-Driven Camera Concepts: The Rise of the Robot Phone

In 2026, the idea of a “robot phone” is no longer science fiction but an emerging design direction that redefines what a camera module can be. Instead of relying solely on internal OIS, sensor shift, and AI stabilization, manufacturers are beginning to explore physically articulated camera systems that move independently from the phone body.

This marks a shift from computational correction to mechanical autonomy. The camera does not just compensate for shake after it happens. It actively repositions itself in real time, guided by AI-driven scene recognition and motion prediction.

According to reports surrounding Mobile World Congress 2026, Honor’s upcoming Robot Phone concept introduces a fold-out robotic camera arm designed to track subjects and counteract hand movement through physical motion control. This approach combines robotics, computer vision, and multi-modal AI into a single imaging architecture.

| Feature | Conventional Flagship | Robot Phone Concept |
|---|---|---|
| Stabilization | OIS + AI EIS | Physical robotic arm + AI |
| Subject Tracking | Digital crop + tracking | Motorized lens repositioning |
| Framing Adjustment | User hand movement | Autonomous camera movement |

The key innovation lies in decoupling the camera’s orientation from the user’s grip. Even if the device tilts or shakes, the robotic module can maintain a stable horizon and locked subject framing. This principle resembles gimbal physics, but instead of being an accessory, it becomes an integrated robotic subsystem.

Industry observers note that this concept aligns with broader trends in AI-powered tracking seen in smartphone gimbals and DockKit-enabled ecosystems. However, the robot phone internalizes that intelligence. The camera can analyze skeletal movement, facial orientation, and velocity vectors, then reposition itself proactively rather than reactively.

This transforms the smartphone from a passive imaging device into an active filming partner. For creators, it means smoother solo shooting, automated follow shots, and stable telephoto capture without external rigs.

From an engineering standpoint, robotic stabilization also reduces the computational burden required for aggressive digital cropping. Because physical correction happens before the frame is captured, more of the sensor’s native resolution can be preserved. In high-magnification scenarios, this mechanical advantage becomes especially valuable.
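The resolution cost of digital cropping is easy to quantify. The sketch below assumes an electronic stabilizer that reserves the same fraction of the frame on every edge; the 10% figure is purely illustrative and not a measured value for any phone.

```python
def retained_fraction(margin):
    """Fraction of sensor pixels left after cropping `margin` of the
    width and height away from each edge."""
    if not 0 <= margin < 0.5:
        raise ValueError("margin must be in [0, 0.5)")
    side = 1 - 2 * margin          # remaining share of each dimension
    return side * side             # remaining share of the pixel count

# Illustrative only: a stabilizer that reserves 10% on every edge.
kept = retained_fraction(0.10)
```

Under that assumption only about 64% of the pixels survive, so any correction done mechanically before capture translates directly into resolution that does not have to be sacrificed or upscaled afterward.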

There are design trade-offs. Moving components introduce durability concerns, energy consumption challenges, and spatial constraints within increasingly thin devices. Yet advances in compact actuators and precision motor control, already proven in OIS actuator research published on Semantic Scholar, suggest that miniaturized motion systems can achieve high responsiveness within tight tolerances.

The broader implication is philosophical as much as technical. Traditional smartphones assumed that stability must come from either steady hands or software compensation. Robotic camera concepts challenge that assumption by embedding motion intelligence directly into hardware.

As AI models grow more predictive—anticipating subject direction before movement peaks—the synergy between robotics and neural processing could enable cameras that reposition milliseconds ahead of motion. In that scenario, the “robot phone” becomes not just stabilized, but anticipatory.

For gadget enthusiasts watching the evolution of mobile imaging, this represents the next frontier: cameras that do not merely see and correct, but move, decide, and collaborate with the user in real time.

DockKit and AI Gimbals: Turning Smartphones into Intelligent Tracking Systems

In 2026, DockKit and AI-powered gimbals are redefining what a smartphone can do. Instead of relying solely on in-body stabilization, users can now transform their devices into intelligent, self-tracking camera systems that rival dedicated production gear.

Apple’s DockKit framework operates at the OS level, allowing motorized stands and gimbals to communicate directly with the iPhone’s native camera app, FaceTime, and even third-party social platforms. According to Imaging Resource, this system-level integration eliminates the need for proprietary apps, dramatically lowering friction for creators.

This seemingly subtle shift has profound implications. Tracking is no longer an “add-on feature” but a core camera capability embedded into the ecosystem.

| Device | Key Feature | Tracking Capability |
|---|---|---|
| Insta360 Flow 2 Pro | DockKit native support | 360° infinite rotation, Deep Track 4.0 |
| DJI Osmo Mobile 7P | ActiveTrack 7.0 | Multi-subject AI tracking |
| Belkin Auto-Tracking Stand Pro | MagSafe charging | Face and body 360° tracking |

For telephoto shooting, this integration is especially powerful. At higher focal lengths, even minor framing errors become exaggerated. AI gimbals continuously analyze subject position and motion vectors, adjusting pan and tilt motors in milliseconds to maintain composition.

The Insta360 Flow 2 Pro, introduced as the first DockKit-compatible stabilizer, pairs hardware rotation with Deep Track 4.0 algorithms. Its Active Zoom Tracking maintains subject lock even beyond 15x zoom, where manual reframing would typically cause jitter or loss of focus.

DJI’s Osmo Mobile 7P extends this concept by leveraging drone-derived tracking logic. ActiveTrack 7.0 recognizes multiple subjects and predicts trajectory, not just position. This predictive layer reduces overshoot and micro-corrections, resulting in footage that feels intentionally directed rather than mechanically stabilized.
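Why predicting trajectory beats chasing position can be shown with a toy model. This is not ActiveTrack's algorithm, which is not public; it assumes the motor reaches its commanded angle one sample period late, and the function name and numbers are made up for illustration.

```python
def pointing_errors(angles_deg, dt, predict):
    """Pointing error per step when the motor arrives one sample period late.

    angles_deg: subject bearing at each detection, in degrees
    dt:         seconds between detections
    predict:    if True, aim where the subject will be; otherwise aim at
                where it was last seen
    """
    errors = []
    for k in range(1, len(angles_deg) - 1):
        velocity = (angles_deg[k] - angles_deg[k - 1]) / dt   # deg/s from the last two detections
        lead = velocity * dt if predict else 0.0              # constant-velocity look-ahead
        command = angles_deg[k] + lead
        errors.append(abs(angles_deg[k + 1] - command))       # subject has moved on by arrival time
    return errors

# Hypothetical subject crossing the frame at a steady 20 degrees per second.
bearings = [0.0, 2.0, 4.0, 6.0, 8.0]
reactive = pointing_errors(bearings, dt=0.1, predict=False)
predictive = pointing_errors(bearings, dt=0.1, predict=True)
```

For this steady subject the reactive controller trails by a constant 2 degrees, while the constant-velocity prediction removes the lag almost entirely. Real subjects accelerate and detections are noisy, so production trackers would need filtering on top of this idea.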

Belkin’s Auto-Tracking Stand Pro demonstrates another dimension: stationary intelligence. Designed for live streaming and workshops, it rotates 360 degrees while charging via MagSafe. This makes long-form telephoto streaming sessions viable without battery anxiety or manual repositioning.

What makes these systems truly intelligent is their fusion of on-device AI and mechanical actuation. The smartphone’s NPU identifies faces, bodies, or moving objects, while the gimbal’s motors execute smooth counter-rotations. The result is hybrid stabilization that combines digital prediction with physical correction.

As mobile photography trends in 2026 increasingly emphasize ecosystem integration, smartphones are evolving into modular camera platforms. With DockKit-enabled accessories, users are no longer just stabilizing footage—they are automating cinematography.

For gadget enthusiasts who demand precision in telephoto capture, this convergence means one thing: your smartphone is no longer a passive imaging device. It actively observes, predicts, and moves with your subject, turning everyday shooting into an intelligent tracking experience.

Professional Workflows: Log Video, ProRes, Multicam, and External SSD Recording

In 2026, smartphone telephoto shooting is no longer limited to capture. It has evolved into a fully integrated professional workflow that rivals dedicated cinema cameras.

With support for Log video, ProRes, synchronized multicam control, and direct external SSD recording, flagship devices now function as modular production systems rather than standalone phones.

According to industry analysis from ShiftCam Gear and coverage by PCMag, this shift marks the moment when mobile imaging crossed from “high-quality convenience” into true production-grade territory.

Log Video and ProRes: Maximum Post-Production Flexibility

Log recording formats such as Apple Log 2 preserve extended dynamic range and flatter tonal curves, allowing greater latitude in color grading.

When combined with ProRes or ProRes RAW, videographers gain access to high bit-depth recording optimized for professional editing environments like Final Cut Pro and DaVinci Resolve.

This means telephoto footage retains highlight detail, shadow texture, and color information that would otherwise be clipped in standard video profiles.

| Format | Primary Advantage | Use Case |
|---|---|---|
| Apple Log 2 | Extended dynamic range | Color grading flexibility |
| ProRes | High-quality intra-frame codec | Professional editing workflow |
| ProRes RAW | Sensor-level data retention | Advanced cinematic production |

For telephoto shooters, this is especially critical. Compression artifacts and tonal clipping are more noticeable at long focal lengths, where fine textures and atmospheric depth define image quality.

Log and ProRes together ensure that distant architectural details, stage lighting at concerts, or wildlife highlights remain gradable in post-production.

Live Multicam: Studio-Level Coordination

One of the most transformative developments is Live Multicam control. Multiple smartphones can now be wirelessly synchronized and managed from a single iPad interface.

Each device can be assigned a different focal length, including telephoto, while maintaining synchronized recording and consistent exposure profiles.

This eliminates the traditional need for bulky multi-camera rigs in interviews, events, or live performances.

Timecode alignment ensures seamless editing, drastically reducing post-production complexity. For creators covering sports events or live shows, telephoto angles can be captured simultaneously with wide establishing shots, all within a unified ecosystem.
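To see why shared timecode simplifies editing, consider how an editor could line up clips from their start timecodes. The sketch assumes non-drop-frame `HH:MM:SS:FF` timecode at an integer frame rate; the clip names and values are hypothetical.

```python
def timecode_to_frames(tc, fps):
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff

def sync_offsets(start_timecodes, fps):
    """Frames to trim from the head of each clip so all clips begin at the
    same instant (the start of the clip that began recording last)."""
    starts = {name: timecode_to_frames(tc, fps) for name, tc in start_timecodes.items()}
    latest = max(starts.values())
    return {name: latest - start for name, start in starts.items()}

# Hypothetical two-phone shoot at 24 fps.
offsets = sync_offsets({"wide": "10:00:00:00", "tele": "10:00:01:12"}, fps=24)
```

In this example the wide clip started 36 frames before the telephoto clip, so trimming 36 frames from its head puts both angles on the same frame without any manual audio or clap matching.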

External SSD Recording: Removing Storage Bottlenecks

High-bitrate ProRes recording generates massive data throughput, particularly when shooting 4K or 8K telephoto footage.

Direct recording to external SSDs solves thermal and storage constraints while enabling longer continuous takes.

Industry reports highlight that bypassing internal storage not only increases recording duration but also streamlines file transfer directly into professional editing systems.
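A quick back-of-the-envelope calculation shows the scale involved. The 700 Mb/s bitrate below is an assumed, illustrative figure in the range commonly quoted for high-quality 4K ProRes at 30 fps; substitute the actual rate for your codec, resolution, and frame rate.

```python
def recording_minutes(capacity_gb, bitrate_mbps):
    """Minutes of footage that fit on a drive (decimal units: 1 GB = 10**9 bytes)."""
    capacity_bits = capacity_gb * 10**9 * 8
    seconds = capacity_bits / (bitrate_mbps * 10**6)
    return seconds / 60

def required_write_speed_mb_s(bitrate_mbps):
    """Sustained write speed in megabytes per second needed to keep up with the stream."""
    return bitrate_mbps / 8

# Assumed values for illustration: a 1 TB SSD and a 700 Mb/s stream.
minutes = recording_minutes(capacity_gb=1000, bitrate_mbps=700)
write_speed = required_write_speed_mb_s(700)
```

Under these assumptions a 1 TB drive holds a little over three hours of footage and must sustain about 87.5 MB/s, while a 256 GB phone shooting to internal storage would fill up in well under an hour.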

This is particularly valuable for telephoto work, where moments are unpredictable. Wildlife movement, stage performances, or sports sequences cannot be paused for storage management.

By combining Log capture, ProRes encoding, multicam synchronization, and SSD-based recording, creators gain a stable, scalable workflow built for demanding scenarios.

In 2026, the smartphone is no longer just capable of professional output. It is structurally integrated into professional production pipelines.

Human Factors and Ergonomics: Physics-Based Techniques to Minimize Shake

Even with cutting-edge OIS and AI stabilization, the final layer of shake control depends on human biomechanics. In high-magnification telephoto shooting, a 1 mm hand movement can translate into a dramatic shift in framing. Understanding how your body generates and transmits vibration is essential if you want consistently sharp results.
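The amplification can be estimated with a simple pinhole-camera model. Every number here is an assumption chosen for illustration: one end of the phone moves 1 mm relative to the other across a 160 mm grip span, the image is 4000 pixels wide, and focal lengths are 35 mm-equivalent values (24 mm for 1x, 240 mm for 10x).

```python
import math

FULL_FRAME_WIDTH_MM = 36.0  # reference sensor width for 35 mm-equivalent focal lengths

def pixel_shift(hand_offset_mm, grip_span_mm, equiv_focal_mm, image_width_px):
    """Approximate horizontal image shift, in pixels, caused by a small tilt.

    The tilt is the angle produced when one end of the phone moves
    `hand_offset_mm` relative to the other end across `grip_span_mm`.
    """
    tilt_rad = math.atan2(hand_offset_mm, grip_span_mm)
    shift_on_sensor_mm = equiv_focal_mm * math.tan(tilt_rad)   # pinhole projection
    return shift_on_sensor_mm / FULL_FRAME_WIDTH_MM * image_width_px

# Assumed values: 1 mm offset, 160 mm grip span, 4000 px wide image.
shift_1x = pixel_shift(1.0, 160.0, 24.0, 4000)
shift_10x = pixel_shift(1.0, 160.0, 240.0, 4000)
```

With these inputs the same 1 mm movement displaces the image by about 17 pixels at 1x and about 167 pixels at 10x. The shift scales linearly with focal length, which is why posture that is good enough for a wide shot falls apart at long zoom.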

Research on perceived vibration strength in mobile devices indicates that device weight and vibration frequency significantly affect muscular response. According to studies published on ResearchGate, heavier devices can trigger stronger compensatory muscle contractions, especially at lower frequencies. These micro-contractions often introduce subtle tremors that are amplified at 5x or 10x zoom.

From a physics perspective, your arms act as levers. The longer the unsupported distance between your hands and torso, the greater the torque generated by small movements. Bringing your elbows inward reduces the lever arm and shortens the vibration pathway.

Camera retailers such as Kitamura emphasize that tucking your elbows into your ribcage effectively increases structural support points. This transforms your upper body into a semi-rigid frame, lowering angular displacement during shutter activation.

| Technique | Physical Principle | Effect on Telephoto Stability |
|---|---|---|
| Elbows tucked | Reduced lever length | Less angular amplification |
| Exhale before shutter | Lower thoracic motion | Reduced vertical oscillation |
| Two-handed grip | Distributed load | Lower localized tremor |

Breathing control is another overlooked factor. During inhalation, chest expansion subtly shifts arm position. Professional shooters often release the shutter at the end of a gentle exhale, when thoracic movement is momentarily minimized. This timing reduces vertical oscillation, particularly noticeable at 8x and beyond.

Shutter interaction itself can induce “tap shake.” Many smartphones are engineered to trigger the shutter upon finger release rather than initial touch. Keeping your finger lightly resting on the screen and lifting it smoothly avoids impulse force transmission through the display layer.

Accelerometer research in structural vibration analysis shows that the mounting method strongly affects measurement stability. Applied to photography, this suggests that bracing against walls, railings, or poles converts your body from a free-moving system into a constrained one with fewer degrees of freedom.

Panning with a moving subject also leverages relative motion physics. By synchronizing your movement with the subject’s velocity, you reduce relative displacement while allowing background blur to form naturally. This technique is especially powerful in sports or pet photography at medium telephoto ranges.

Finally, grip pressure should remain firm but not rigid. Excessive tension increases high-frequency tremor caused by muscle fatigue. A balanced, relaxed hold combined with skeletal support creates a low-frequency, damped system that modern OIS and AI algorithms can correct more effectively.

In 2026’s ultra-high-resolution telephoto environment, ergonomics is no longer a beginner’s tip but a performance multiplier. When biomechanics and stabilization hardware work in harmony, even handheld 10x shots can approach tripod-level clarity.

Market Data and Industry Growth: Metalens Expansion and Consumer Demand Trends

The expansion of metalens technology is no longer a laboratory narrative but a measurable market shift in 2026. According to market analyses cited by Archive Market Research and Research and Markets, the mobile metalens segment reached approximately 93 million USD in 2026, growing at a CAGR of 5.9% through the early 2030s. This steady rise reflects how flat optics are transitioning from pilot adoption to scalable commercial deployment in smartphones.

More striking is the mid-term projection. Forecast data referenced in industry reports indicates that the broader metalens solutions market could reach 3.3 billion USD by 2032, with an exceptionally high projected CAGR of 86.1% between 2025 and 2032. The two figures describe different market scopes, so they are not directly comparable, but the contrast between today’s mobile-segment revenue and the long-term forecast points to a structural inflection point rather than incremental growth.

| Year | Market Scope | Market Size | Growth Indicator |
|---|---|---|---|
| 2024 | Mobile metalens segment | 83 million USD | Early commercialization phase |
| 2026 | Mobile metalens segment | 93 million USD | CAGR 5.9% (2025–2033) |
| 2032 (Forecast) | Broader metalens solutions market | 3.3 billion USD | CAGR 86.1% (2025–2032) |
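The growth figures can be sanity-checked with the standard compound-growth formula. The helper names below are ours, and the second calculation only derives what the quoted forecast would imply; it is not a figure taken from any of the cited reports.

```python
def project(value, cagr, years):
    """Value after compounding at `cagr` (for example 0.059 for 5.9%) for `years` years."""
    return value * (1 + cagr) ** years

def implied_base(future_value, cagr, years):
    """Starting value that would grow into `future_value` at the given CAGR."""
    return future_value / (1 + cagr) ** years

# Mobile segment: 83 million USD in 2024, compounded at 5.9% for two years.
mobile_2026 = project(83, 0.059, 2)

# Broader market: the 2025 base implied by 3.3 billion USD (3300 million)
# in 2032 at an 86.1% CAGR over seven years.
broad_2025 = implied_base(3300, 0.861, 7)
```

The first result lands at roughly 93 million USD, matching the 2026 figure. The second implies a 2025 base of only about 43 million USD for the broader market, which underlines that the 86.1% forecast starts from a very small base and belongs to a different data series than the mobile-segment numbers.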

This expansion is tightly linked to consumer demand trends in imaging performance. MMD Research Institute reports that Japanese users continue to rank camera performance as a top priority when upgrading smartphones. In particular, demand has shifted from pure megapixel counts to stability in zoom and video scenarios, especially for social media and live-event recording.

As social media video usage rises, users increasingly expect stable handheld zoom while walking. Stabilized telephoto capture has become a purchasing trigger, not just a specification bonus. This behavioral change directly benefits component categories such as metalenses, advanced OIS actuators, and high-resolution IMUs.

Industry observers including PCMag and TechRadar note that flagship differentiation in 2026 centers on integrated optical stacks rather than isolated features. When brands promote periscope zoom, AI stabilization, and compact modules enabled by flat optics, they are responding to measurable consumer sensitivity to camera protrusion, device thickness, and video smoothness.

From a supply-chain perspective, the adoption of semiconductor-style lithography processes for metalens production lowers long-term marginal costs once scale is achieved. This creates a feedback loop: higher adoption reduces cost per unit, enabling mid-range diffusion, which further accelerates volume growth.

The result is a dual-engine expansion model. On the demand side, culturally driven zoom usage, creator economy growth, and short-form video consumption push expectations upward. On the supply side, scalable nanofabrication and ecosystem partnerships push costs downward.

2026 therefore represents a transition year in which consumer expectations for professional-grade telephoto stability converge with manufacturable flat-optics technology. The market data does not merely describe growth; it illustrates the alignment of user psychology, component innovation, and industrial scaling that defines the next phase of mobile imaging.

References

- TechTimes: Smartphone Cameras 2026: How AI and Advanced Features Outperform Megapixels

- PCMag: The Best Camera Phones We’ve Tested for 2026

- Design World: IMU sensors bring optical image stabilization to more mobile devices

- MDPI: Video Stabilization: A Comprehensive Survey from Classical Mechanics to Deep Learning Paradigms

- Imaging Resource: Ultimate Smartphone Gimbals Buyer’s Guide (2025)

- Huawei Central: Honor to break decade-old design with robot camera phone in 2026

- WebProNews: Carnegie Mellon Meta-Lens Camera Delivers All-Depth Sharp Focus